Landmarks in haemophilia care

The Babylonian Talmud, written in the 5th century and recording a ruling made in the 2nd century, is acknowledged as the source of the first documented mention of a bleeding disorder that may have been haemophilia.

The reference comes in the form of advice in the Yevamot tractate,1 in a section that primarily addresses inconsistency in rabbinical statements – specifically, whether an event should occur two or three times before being accepted as proof. Rabbi Judah the Patriarch stated:

If a woman circumcised her first son and he died as a result of the circumcision, and she circumcised her second son and he also died, she should not circumcise her third son, as the deaths of the first two produce a presumption that this woman’s sons die as a result of circumcision.

However, Rabbi Simon ben Gamaliel affirmed the general principle of Talmudic law that three occurrences are required:

She should circumcise her third son, as there is not considered to be a legal presumption that her sons die from circumcision, but she should not circumcise her fourth son if her first three sons died from circumcision.

In the 11th century, Rabbi Isaac Alfasi confirmed Rabbi Judah’s view.2

The 12th-century physician Moses Maimonides, in a commentary again focusing on the risks of circumcision, noted that children may be at risk through their mother, even when they have different fathers; the possibility that the risk could also be transmitted via the father (which is not the case in haemophilia) was recognised in the 16th century by Rabbi Joseph Karo.2

The first description of haemophilia is credited to the surgeon Al-Zahrawi (936–1013 AD), also known as Albucasis.3–5 He described a fatal ‘blood disease’ affecting only males, in which minor trauma provoked prolonged bleeding. Observing the pattern of occurrence in one village, he deduced that the disorder was inherited.

The Gospel of Matthew in the New Testament describes a woman who had suffered from bleeding for twelve years and was healed by Jesus. The King James Bible states:6

And, behold, a woman, which was diseased with an issue of blood twelve years, came behind him, and touched the hem of his garment:

For she said within herself, if I may but touch his garment I shall be whole.

But Jesus turned him about, and when he saw her, he said, Daughter, be of good comfort; thy faith hath made thee whole. And the woman was made whole from that hour.

References

- Yevamot 64b. Babylonian Talmud. William Davidson Edition (accessed 26 February 2019).

- Rosner F. Hemophilia in the Talmud and rabbinic writings. Ann Intern Med 1969; 70: 833-7.

- Kaadan AN, Angrini M. Who discovered haemophilia? (accessed 26 February 2019).

- Rosendaal FR, Smit C, Briët E. Hemophilia treatment in historical perspective: a review of medical and social developments. Ann Hematol 1991; 62: 5-15.

- Ingram GI. The history of haemophilia. J Clin Pathol 1976; 29: 469-79.

- Matthew 9:20-22. King James Bible (accessed 26 February 2019).

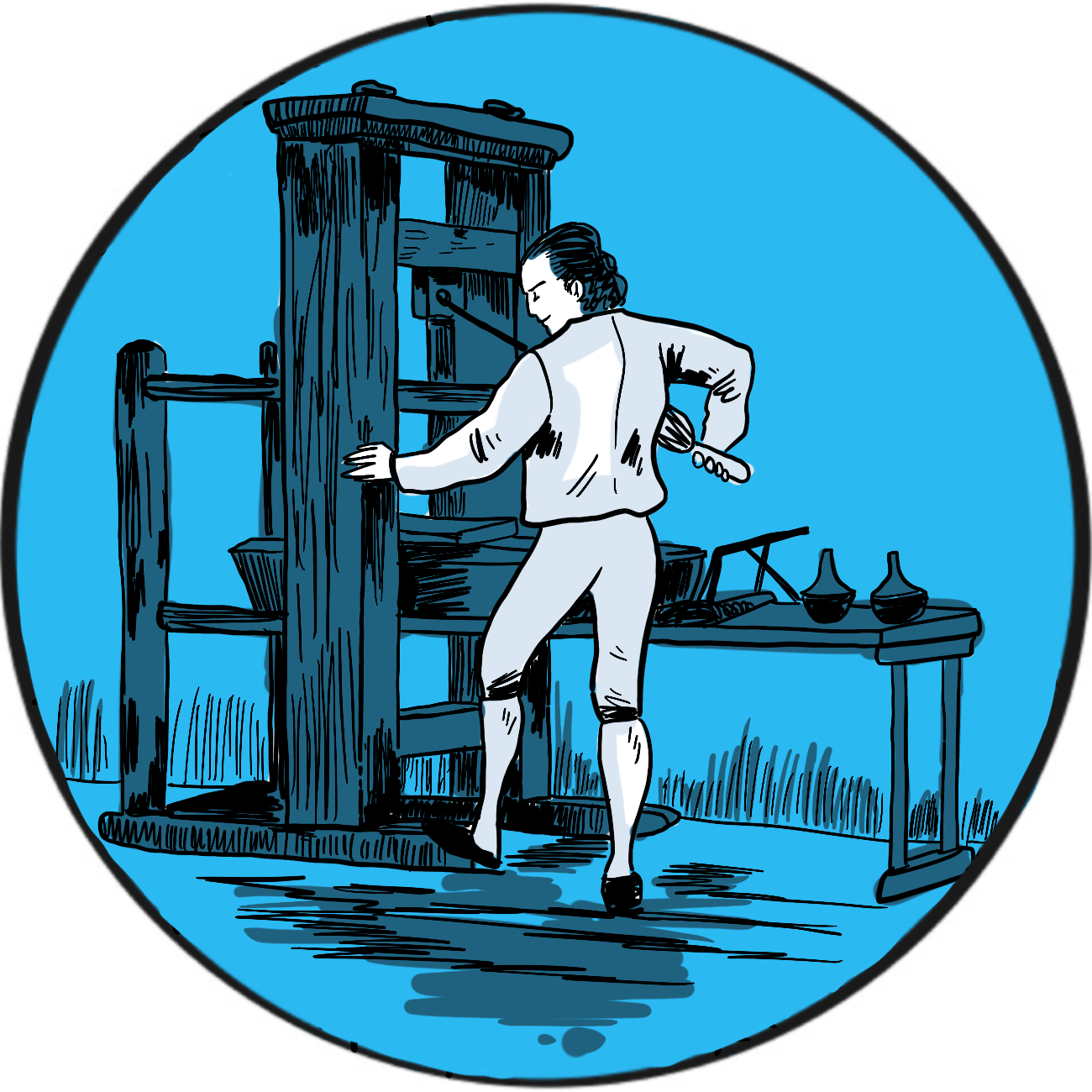

Control of the distribution of knowledge is a powerful asset, both to the purveyors of knowledge and to those who provide the means to disseminate it. The German craftsman Johannes Gutenberg developed the first European movable-type printing press in secret – not to hide it from an elite fearful of a threat to its power, but from his business partners, who wanted a share of the proceeds.

Gutenberg was an inventor and entrepreneur. According to Encyclopaedia Britannica, he financed his activities using advances from three investors.1 They agreed a contract stating that, in the event of a partner’s death, his heirs would be compensated financially but would not inherit any shares in the enterprise. When one of the investors died, his family challenged the contract in court; they lost, but the proceedings revealed that Gutenberg had been concealing his work on a printing machine from his investors. His innovations included a soft metal alloy for making reusable type, an oil-based ink and a press that could apply even pressure to vellum or paper.

In 1450, keen to refine his designs, Gutenberg borrowed more money from Johann Fust, a financier in Mainz, Germany. But Gutenberg seems to have been a perfectionist, and Fust, weary of waiting for a return on his investment, successfully sued him for repayment with interest. The court documents have been preserved and provide clear evidence that Gutenberg developed the first known movable-type printing press in Europe, though he lost it to Fust in 1455.

It is possible that Gutenberg printed indulgences (letters of forgiveness sold by the Catholic Church) as early as 1452. His only major printing enterprise was a Latin edition of the Bible, which he displayed to potential investors in 1454.

The first printed book to bear the name of its printer was a Psalter (a book of psalms for public worship). Published in 1457, it carries the names of Fust and his son-in-law, Peter Schöffer, whom Gutenberg had employed. However, Gutenberg may have been involved in its production.

Gutenberg died in Mainz in 1468. William Caxton introduced the printing press to London in 1476, predominantly publishing the works of Chaucer, popular poets, chivalric romances and histories, mostly in English.

References

- Lehmann-Haupt HE. Johannes Gutenberg. German printer. Encyclopaedia Britannica. Last updated February 2019 (accessed March 2019).

Further reading

The British Library. Treasures in Full: Gutenberg’s Bible (accessed March 2019).

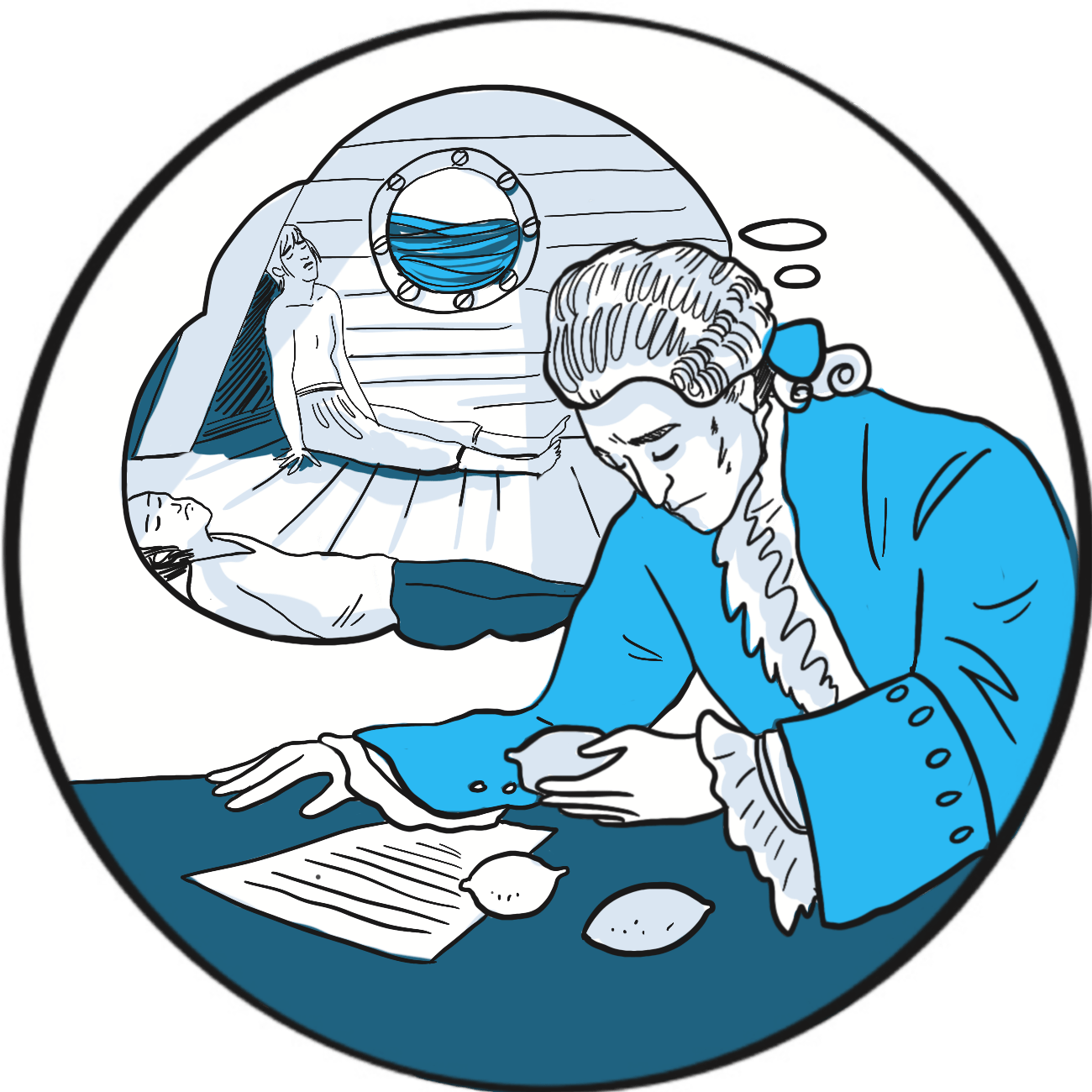

It’s widely known that, in the 19th century, the Americans christened British sailors ‘Limeys’ after the Royal Navy’s alleged fondness for stocking the fleet with limes to prevent scurvy during long voyages. The credit for introducing this practice is sometimes laid at the door of James Lind (1716–1794), an Edinburgh physician who served with the Navy between 1738 and 1748. But Lind’s true innovation was to carry out and document one of the earliest prospective controlled clinical trials.

Vitamin C is essential for the production of structurally normal collagen. Deficiency causes skin lesions, bleeding from the mucosae and into joints, anaemia, delayed wound healing, cardiac failure, hypotension and death.1 Sailors had known for centuries that eating citrus fruit prevented scurvy: the Portuguese explorer Vasco da Gama recorded foraging for citrus fruit in 1498, and its protective effect was described by John Woodall, the first surgeon-general of the East India Company, in 1617.2

In May 1747, Lind was serving on HMS Salisbury as part of a blockade in the English Channel when some of the crew developed scurvy.2 He selected twelve affected men and assigned two to each of six treatments popular at the time: cider, elixir vitriol (dilute sulphuric acid), vinegar with meals, sea water, a herbal paste or citrus fruit (two oranges and one lemon daily, though this continued for only six days before stocks ran out). Lind tried to control for possible confounding variables: the men were as similar as he could find, lived in the same accommodation and ate the same food. Of the two men lucky enough to receive fruit, one was fit for duty after six days and the other was well enough to tend to his comrades.

Lind’s experiment is an example of testing a hypothesis in the scientific way we now recognise as a clinical trial. He described his findings in A treatise of the scurvy. In three parts. Containing an inquiry into the nature, causes and cure, of that disease. Together with a critical and chronological view of what has been published on the subject (1753). The publication also included another innovation: a systematic review of previously published literature.

Despite what seems like convincing evidence, Lind’s finding was not put into practice immediately and the Royal Navy did not order supplies of lemon juice until 1795.3 Surprisingly, Lind never strongly advocated citrus fruit to prevent scurvy, and trials of other remedies continued into the late 18th century.4 This is an early example of a challenge that innovators still face today – translating scientific discoveries into practical interventions.5 Many other factors contributed to ill health aboard ship, including cramped accommodation, poor-quality fresh water, and infectious diseases such as malaria, typhus and yellow fever. It may have been difficult to see the efficacy signal amongst all the noise – another problem facing modern science!

References

- Byard RW, Maxwell-Stewart H. Scurvy-characteristic features and forensic issues. Am J Forensic Med Pathol 2019; 40: 43-6. doi: 10.1097/PAF.0000000000000442.

- Milne I. Who was James Lind, and what exactly did he achieve? JLL Bulletin: Commentaries on the history of treatment evaluation. 2012 (accessed 26 February 2019).

- Houlberg K, Wickenden J, Freshwater D. Five centuries of medical contributions from the Royal Navy. Clin Med (Lond) 2019;19:22-5. doi: 10.7861/clinmedicine.19-1-22.

- Lamb J. Captain Cook and the scourge of scurvy. Updated February 2011 (accessed 26 February 2019).

- Rapport F, Clay-Williams R, Churruca K, et al. The struggle of translating science into action: Foundational concepts of implementation science. J Eval Clin Pract 2018; 24: 117-26. doi: 10.1111/jep.12741.

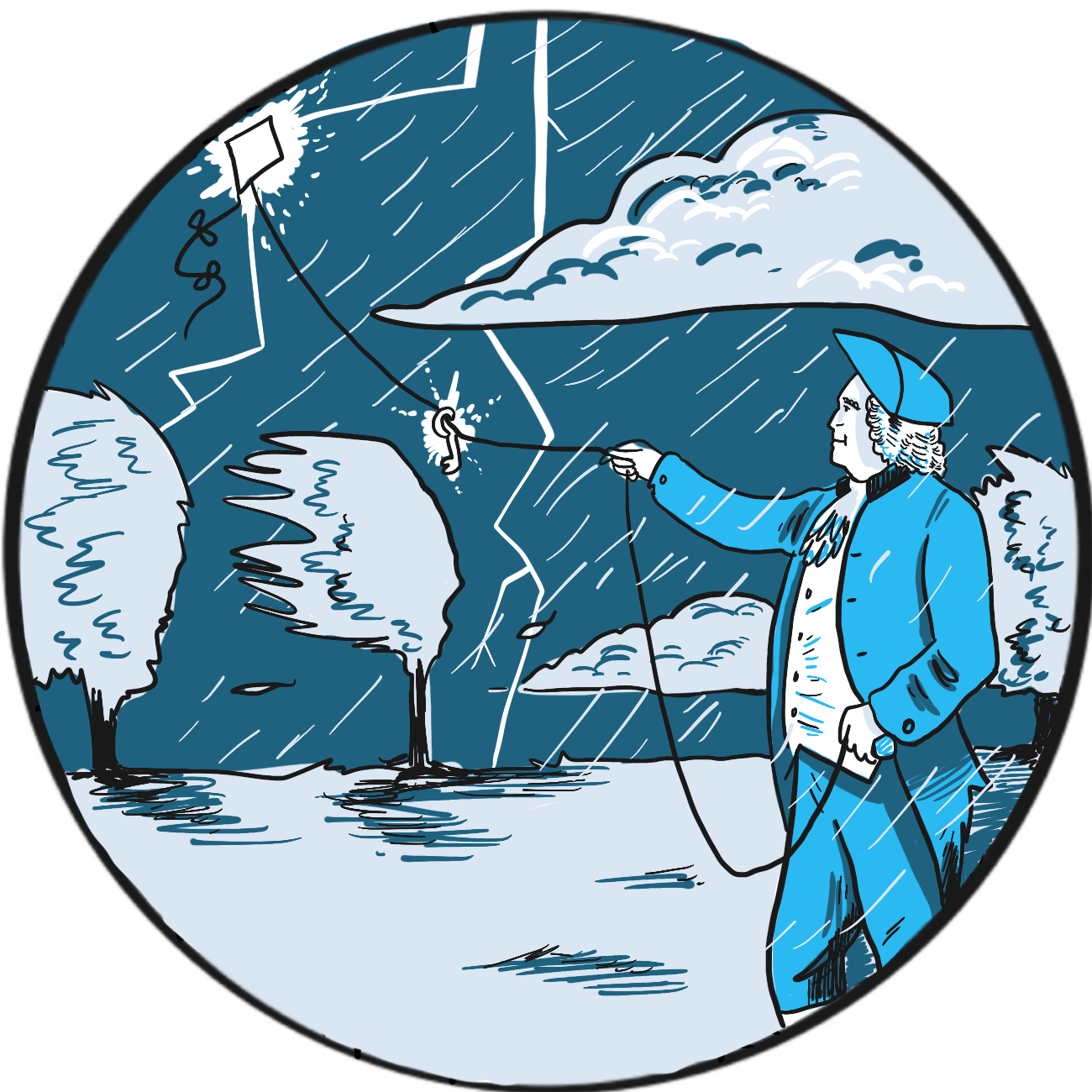

It is not readily apparent why the preference for a pointed or blunt lightning rod should be a sign of colonial rebellion ‒ but that, according to the Franklin Institute in Philadelphia, United States, was one result of Benjamin Franklin’s experiments on electricity in 1752.1

Franklin (1706–1790) was a successful publisher who, in 1748, retired from commerce to devote his time to politics and experiments on electricity. He is credited with the discovery that lightning is electricity, but has several other innovations to his name, including the first use of the terms ‘positive’ and ‘negative’ in reference to electrical charges, and the concept of a battery.

Having given himself a substantial shock in the course of his research, Franklin was familiar with the properties of electricity and recognised similarities between the electrical discharges he created in his laboratory and the appearance of lightning. He wasn’t alone in thinking this and, by the 1750s, several scientists had become interested in protecting tall buildings from lightning strikes.

Franklin proposed that a sharply pointed iron rod could draw a discharge down from a storm and suggested attaching one to the spire of a church that was due to be built (Philadelphia being largely flat).2 His plan was presented to the Royal Society in London and greeted with ridicule, but it was successfully carried out in 1752 by the French scientist Thomas-François Dalibard.3

There is some doubt as to whether Franklin actually conducted the experiment himself; however, it is said that, unaware of Dalibard’s success and having lost patience waiting for the church to be built, he resolved that a kite would do just as well. Helped by his son, he attached a pointed rod to the kite and connected it via a metal line to a key in a Leyden jar (an early form of capacitor, used to store static electricity). The men retreated to a dry barn for safety. The kite was not struck by lightning but drew down electrical charge, as Franklin discovered when he moved his hand near the key.

The story goes that Franklin then became a strong advocate of pointed lightning rods, contrary to the prevailing fashion for blunt rods – a fashion supported by no less than the British monarch George III.1 When Philadelphia’s new buildings were equipped with pointed rods, it was perceived as an anticolonial gesture.

Politics subsequently took up more and more of Franklin’s time. In 1776, he helped to draft and was one of the signatories of the Declaration of Independence and, in 1783, he was a signatory of the Treaty of Paris that ended the American War of Independence.4

References

- Franklin Institute. Franklin’s lightning rod (accessed March 2019).

- Benjamin Franklin Historical Society. Kite experiment (accessed March 2019).

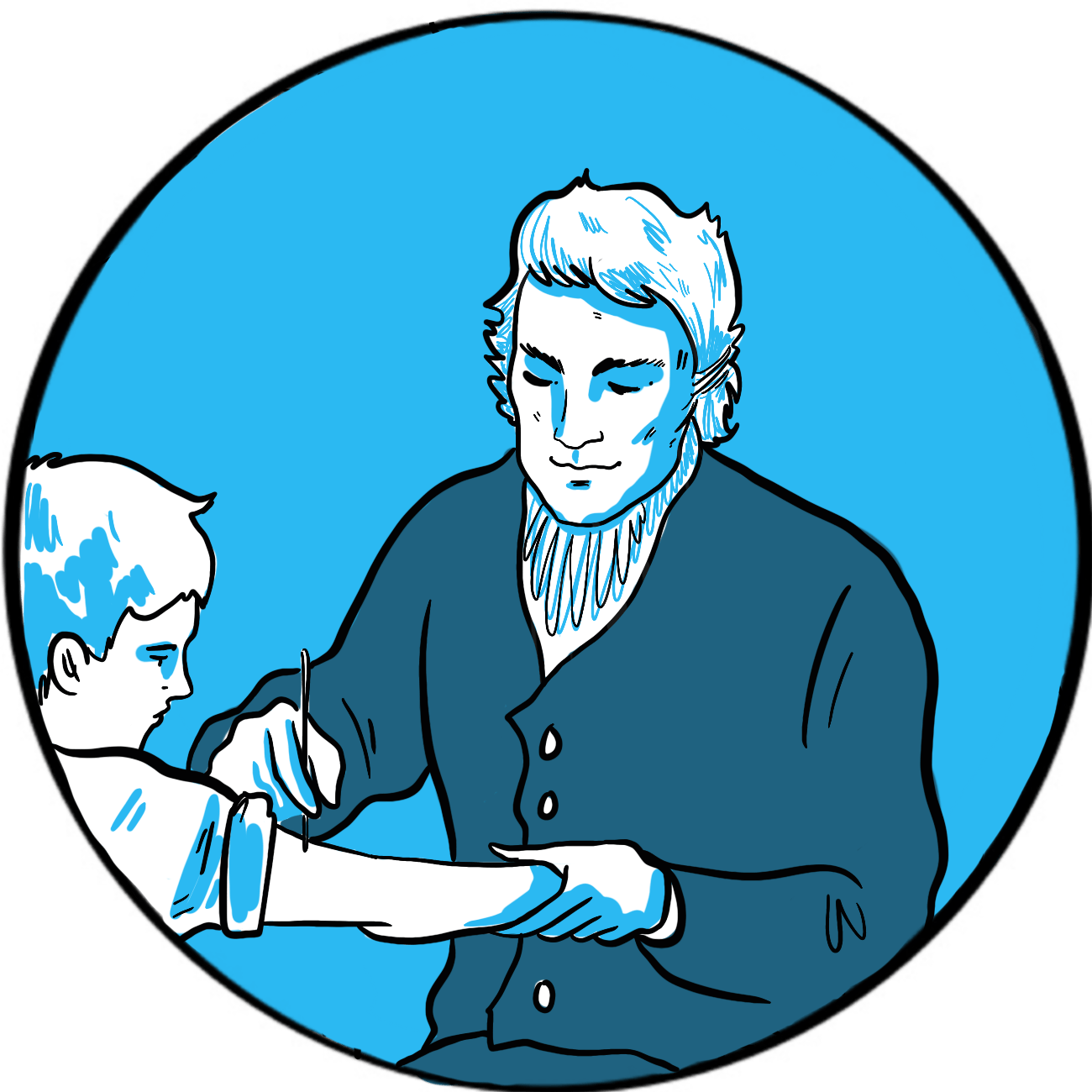

The World Health Organization (WHO) says that vaccination is arguably the most cost-effective single health intervention we have, saving around 2–3 million lives per year worldwide.1 Edward Jenner (1749–1823) is credited with discovering vaccination, but his contribution was, as is often the way with scientific innovation, more an improvement on existing practice, albeit a very significant one. It is also a story that reveals the lack of humanity shown by the wealthy and educated towards people less privileged than themselves.

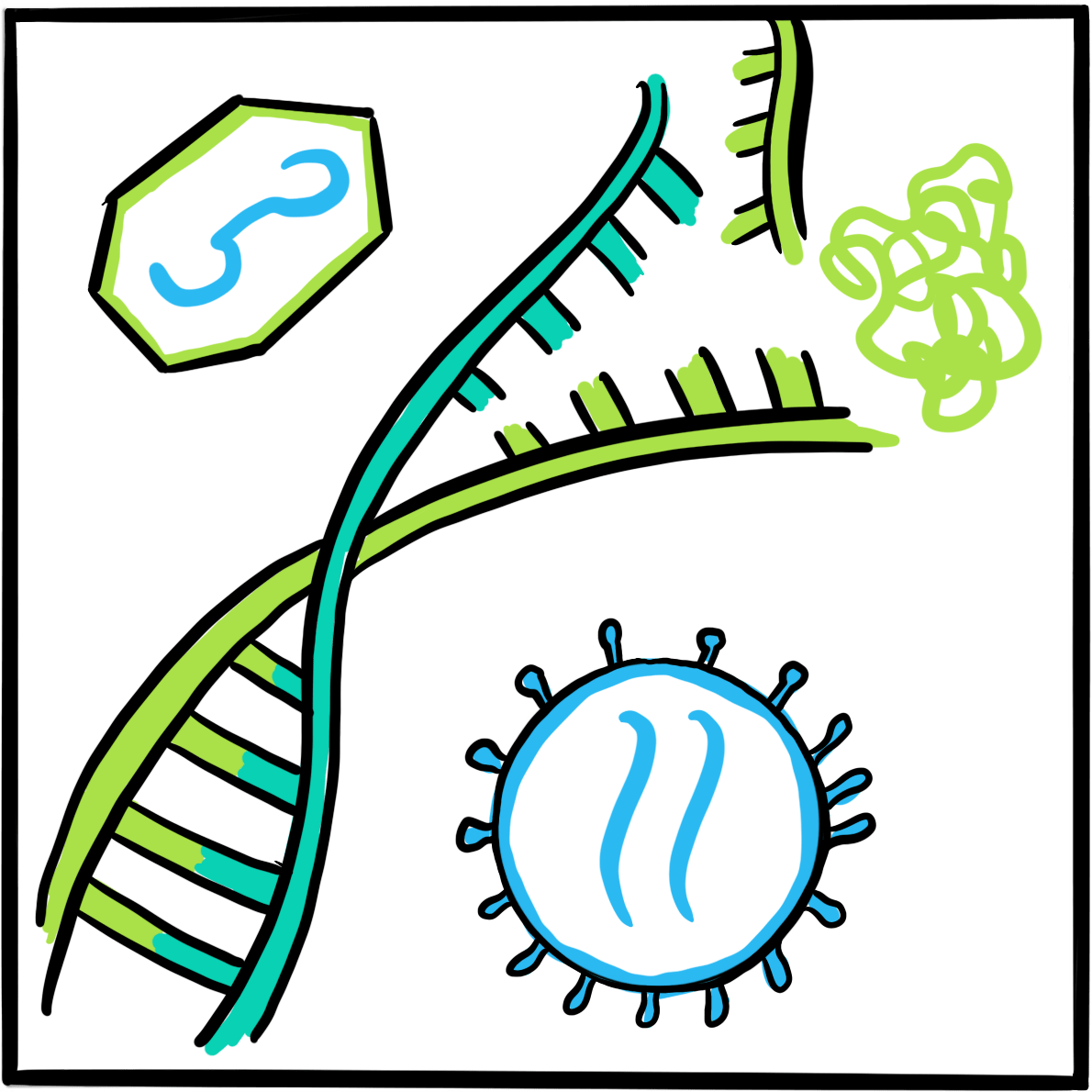

Smallpox is an infection caused by the variola virus that produces fever and a pustular rash, beginning in the mouth and spreading all over the body.2 About 30% of infected people die – historically, reported mortality rates were above 80% among children3 – and those who survive are left with disfiguring scars and sometimes blindness. It is highly infectious. The WHO began a global eradication plan in 1959 and eventually declared the world free of smallpox in 1980.2

Smallpox was introduced into Europe between the 5th and 7th centuries. It was a major killer, with no respect for class or wealth.3 One of the treatments long practised in Africa, India and China was inoculation – the deliberate introduction of material from a smallpox lesion into the skin of an uninfected person, with the aim of making them immune to infection. Inoculation was introduced into Europe at the turn of the 18th century, where it became known as variolation, after variola, the smallpox virus. It was not a benign procedure: 2%–3% of people subsequently died from smallpox, and others contracted syphilis and tuberculosis from contaminated blood.

Variolation was accepted by English society thanks to the advocacy of Lady Mary Wortley Montagu, wife of the British Ambassador to Turkey and herself a survivor of smallpox, who had her son and daughter inoculated by the embassy surgeon Charles Maitland. This sparked interest from the royal family, who permitted an experiment on prisoners in Newgate gaol and on orphaned children. When none died, and several did not contract smallpox when later exposed to it, Maitland successfully variolated the two daughters of the Prince of Wales in 1722.

Jenner was therefore practising in a culture where variolation was accepted, and he himself had been successfully inoculated at age four. His contribution was to establish the principle of using a safer source of antigenic material. As a country doctor, he had noticed that milkmaids rarely developed smallpox. They did, however, catch cowpox from the cattle they milked. Cowpox is a benign infection in humans, causing only a mild fever and an unsightly rash. It was believed that, after recovering from cowpox, a person never contracted smallpox. Jenner tested the hypothesis that cowpox infection induced lasting immunity to smallpox by inoculating eight-year-old James Phipps, his gardener’s son, with cowpox. The boy caught the infection and recovered after a week. Jenner then inoculated him with smallpox. Fortunately for all involved, and for the world generally, James did not develop the infection. Jenner named the new procedure vaccination, after the Latin for cow, vacca.

Jenner reported his experiment to the Royal Society in London, which gave it a lukewarm response and demanded more proof. He then vaccinated other children, including his son, and the practice gradually took hold among other practitioners. In 1802, Parliament awarded Jenner £10,000 in recognition of his work and a further £20,000 five years later. Variolation was banned in England in 1840 and vaccination with cowpox was made compulsory in 1853.4,5

References

- World Health Organization. 10 facts on immunization. Updated March 2018 (accessed 26 February 2019).

- Smallpox. Centers for Disease Control and Prevention. Updated July 2017 (accessed 26 February 2019).

- Riedel S. Edward Jenner and the history of smallpox and vaccination. Proc (Bayl Univ Med Cent) 2005; 18: 21–5.

- About Edward Jenner. The Jenner Institute (accessed 26 February 2019).

- Edward Jenner (1749-1823). Brought to Life: Exploring the History of Medicine. Science Museum (accessed 26 February 2019).

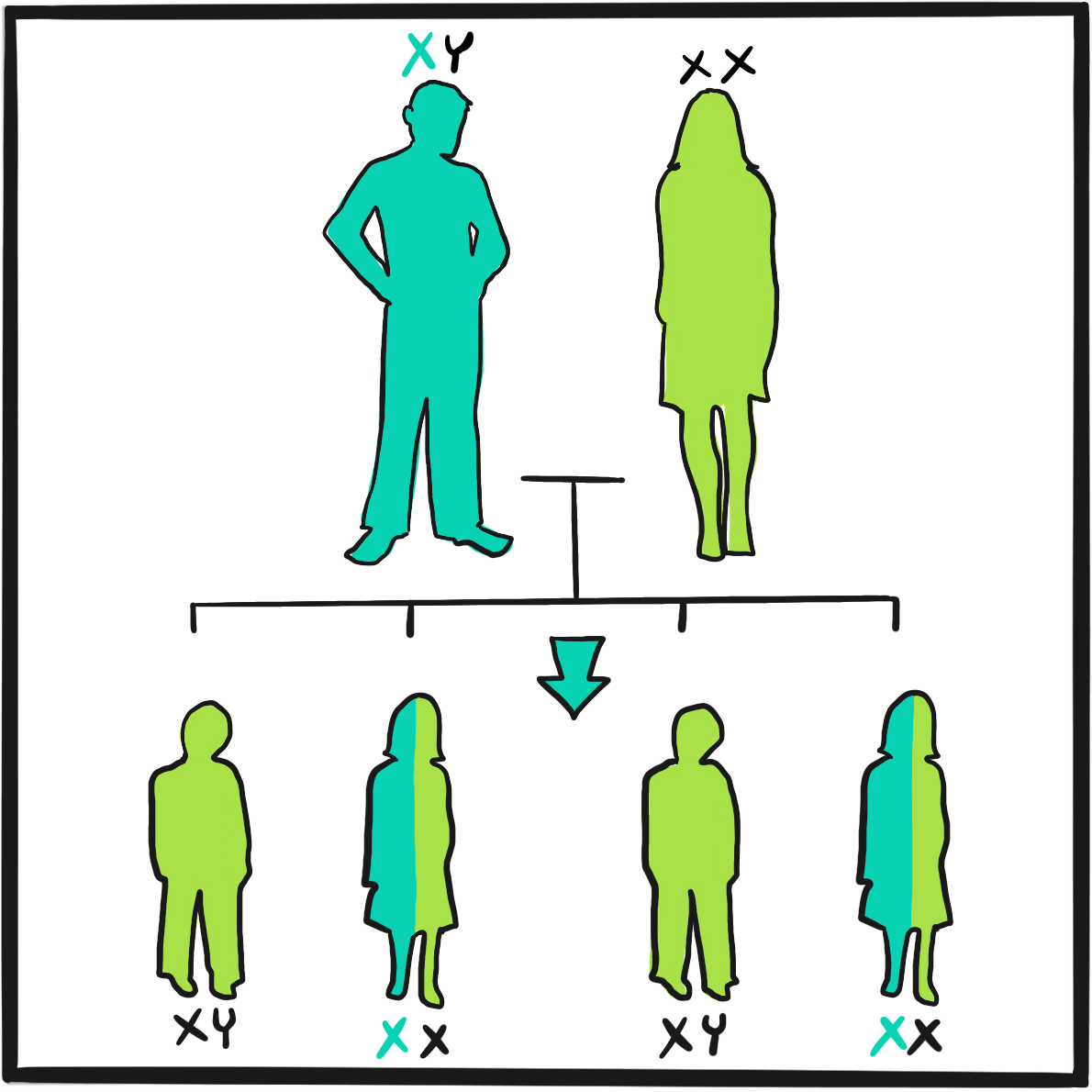

The first description of a bleeding disorder that affected only males is usually attributed to John C Otto (1774‒1844), an American physician working in Philadelphia, Pennsylvania.1 However, several reports documenting transmission within families seem to have appeared around that time:

…the anonymous obituarist of Isaac Zoll, writing in 1791 (qu. McKusick 1962), Consbruch in 1793 and 1810, Rave in 1796 (qu. Bullock and Fildes, 1911), and Otto in 1803 all described families in which males suffered abnormally prolonged post-traumatic bleeding. In the Zoll family, six brothers bled to death after minor injuries, but their half-siblings by a different mother were unaffected; in Consbruch’s family, a man and two of his sister’s sons were affected; Rave himself was affected, with his three brothers…2

Otto’s account is a model of scientific caution and precision. He begins by describing a woman named Smith of Plymouth, New Hampshire, who transmitted an ‘idiosyncrasy’ to her descendants, the Smiths and the Shepards: ‘If the least scratch is made on the skin of some of them, as mortal a hemorrhagy will eventually ensue as if the largest wound is inflicted.’ He goes on to describe how persistent bleeding, despite partial healing, rapidly disables the individual and ‘death, from mere debility, then soon closes the scene.’

Otto adds: ‘It is a surprising circumstance that the males only are subject to this strange affection, and that all of them are not liable to it… Although females are exempt, they are still capable of transmitting it to their male children…’

The Smiths were not the only family known to have the condition. Otto cites reports of similar cases described by several of his colleagues and, with great insight, adds: ‘When the cases shall become more numerous, it may perhaps be found that the female sex is not entirely exempt…’

The 1803 report describes the many medicines offered as treatment, including bark, astringents (applied topically and taken internally), strong styptics and opiates. Only one worked for Otto’s patients: sulphate of soda (sodium sulphate). ‘An ordinary purging dose, administered two or three days in succession, generally stops them [haemorrhage]; and, by a more frequent repetition, is certain of producing this effect.’ Its mechanism of action is a matter for speculation (possibly dehydration and hypotension, secondary to bleeding and purgation). Otto did not understand how this medicine acted, but he did not dismiss the family’s experience of treatment, noting that it had worked too often for this to be coincidence and that his ignorance of how it worked was no reason to disbelieve them. And, as he pointed out, ‘In every case, however, a doubtful remedy is preferable to leaving the patient to his fate.’

References

- Otto JC. An account of an hemorrhagic disposition existing in certain families. Reprinted from The Medical Repository and Review of American Publications on Medicine, Surgery and the Auxiliary Branches of Science 1803, vol. 6, 1‒4. Clin Orthop Relat Res 1996; 4‒6 (accessed 26 February 2019).

- Ingram GI. The history of haemophilia. J Clin Pathol 1976; 29: 469‒79.

The Philadelphia physician John Otto is credited with being the first to record that haemophilia causes bleeding only in males. In 1803, he wrote:

‘It is a surprising circumstance that the males only are subject to this strange affection, and that all of them are not liable to it … Although females are exempt, they are still capable of transmitting it to their male children…’1

In the years that followed, accounts were published of several families affected by haemophilia in the newly independent United States. Among the most important was a lengthy description of the Appleton-Swain family by John Hay, a physician in Reading, Massachusetts.2 His interest was both professional and personal: Jonathan, his eldest son, had married a descendant of Oliver Appleton, the first family member known to be affected, who had lived 100 years previously. Jonathan had eight children, three of whom were sons; at the time of Hay’s report, none had shown signs of exceptional bleeding, but Hay feared that the youngest, then eight years old, ‘has the exact complexion of the bleeders’.

Hay’s description of the fate of the Appletons and Swains makes grim reading. Oliver Appleton died in old age from bleeding from a pressure sore and the urethra. The death of Oliver Swain, one of his grandsons, is recorded in full. Oliver was kicked by a horse, producing a leg wound three inches long and open to the bone. He initially bled profusely, then alternately rapidly and slowly as physicians tried to stem the flow. He took 19 days to die, in severe pain and ‘in the greatest distress of body’, often lucid but sometimes delirious. Attempts to apply a ligature above the wound failed: ‘the blood has burst out above the ligatures, which caused extreme distress’. Oliver was attended by his brother Thomas, a doctor, who later died of a pulmonary haemorrhage. In all, 16 male members of the family were known to have haemophilia, of whom five were known to have died from bleeding.

Though Hay never explicitly states that Oliver Appleton’s female descendants were carriers, his painstaking account clearly identifies the women whose sons had haemophilia although they themselves had no apparent bleeding disorder. According to Bulloch and Fildes’ authoritative 1911 review of what was known about haemophilia,3 Hay’s report does not provide definitive evidence of haemophilia in all the cases he described (for example, some deaths may not have been related to haemophilia and some family members had no recorded bleeding until adulthood). However, they conclude that his description ‘…bears the stamp of accurate observation. Hay was immediately acquainted with many of the cases and even related to them. Under these circumstances it is highly probable that he forbore to multiply instances.’

References

- Otto JC. An account of an hemorrhagic disposition existing in certain families. The Medical Repository and Review of American Publications on Medicine, Surgery and the Auxiliary Branches of Science 1803;6:1-4 (accessed 29 January 2019)

- Hay J. Account of a Remarkable Hæmorrhagic Disposition, Existing in Many Individuals of the Same Family. New Engl J Med 1813;2:221-5. (Reprinted in The London Medical Physical Journal 1815 Jan;33(191):9-12.)

- Bulloch W, Fildes P. Treasury of Human Inheritance. Parts V and VI. Eugenics Laboratory Memoirs XII. University of London Francis Galton Laboratory for National Eugenics. Dulau & Co. London. 1911. (available at https://wellcomelibrary.org).

It may have been Friedrich Hopf, a German student, who first coined the word haemophilia in his doctoral thesis Über die Hämophilie [About Haemophilia] in 1828. Or perhaps it was his supervisor, Professor Schönlein, because some sources say that Hopf’s new word had actually been haemorrhaphilie, which was deemed too cumbersome. Whichever it was, the journey from conception to widespread use was evidently not plain sailing, as other writers at the time suggested many alternatives.

Dr J Wickham Legg, a casualty physician at London’s St Bartholomew’s Hospital, gives a singular account of the acceptance of haemophilia as the word of choice in his A Treatise on Haemophilia, Sometimes Called the Hereditary Haemorrhagic Diathesis (1872):1

‘In England this disease is often called the haemorrhagic diathesis, or tendency … On the continent, the disease is universally called haemophilia, or haemarrhaphilia. The former of these is the more commonly used; neither is good, but it is now too late to attempt to change.’

Earlier terms had included the German Bluterkrankheit, Blutsucht and Blutungssucht, and the French diathèse hémorrhagique, hémorrhagie constitutionnelle and purpura constitutionnel.

Legg resorts to footnotes for grumpy elaboration:

‘Various other names have been proposed: haemorrhophilia, haematophilia, haemorrhagophilia, idiosyncrasia haemorrhagica and morbus haematicus. A name proposed by Uhde… is amychaemorrhagia… perhaps it is the worst yet suggested.

‘Haemorrhaphilia is the name used by Schönlein in his Vorlesungen (third ed. 1837). Virchow seems to think that Schönlein was the author of the word haemophilia. I am unable to say from my own observation by what writer the name was first used. Die Hämophilie is said to be the title of an inaugural dissertation by Hopf, at Würzburg, in 1828. The word is so barbarous and senseless that it is not wonderful that no one should be proud of it.’

So, with Legg’s grudging acceptance, the term haemophilia – by far the best of the options available – has stuck.

Reference

- Legg JW. A Treatise on Haemophilia, Sometimes Called the Hereditary Haemorrhagic Diathesis. London: HK Lewis, 1872 (accessed 26 February 2019).

In 1839, the eminent German surgeon Johann Dieffenbach developed a new procedure to treat strabismus (squint) that involved dividing the muscles around the eye, so as to reduce their effect on one side and rebalance the tension of opposing muscle groups. It was such a success that, in 1840, the London surgeon Samuel Lane considered it an unremarkable procedure to offer 11-year-old George Firmin. Unfortunately, George was a far from unremarkable child, and the complications that followed his operation led to the first recorded use of blood transfusion to treat bleeding due to haemophilia.1

There was more bleeding than usual during surgery, Lane noted, but it subsided and George was able to walk home afterwards. But he soon started bleeding again and the family eventually called the surgeon out that evening, when they belatedly revealed that George had a history of life-threatening bleeding after minor trauma that had resulted in four hospital admissions.

On this occasion, George’s wound continued to bleed for six days and five nights ‘despite the usual remedies, both general and local’. These included applying gum tragacanth mixed with beaver hair to promote coagulation; this was initially successful, but the clot formed was weak and soon displaced. By the fifth day, George had lost so much blood that he was unconscious, vomiting and fitting. Determined that the boy should not die, Lane resolved to attempt a blood transfusion at 7pm on the sixth day.

Transfusion was, by contrast with Dieffenbach’s procedure, a rare event at the time due to its poor track record: early attempts at transfusing blood from lambs and calves had, unsurprisingly, proved fatal. Fortunately for George, the obstetrician James Blundell had realised the importance of using human blood and had carried out the first successful transfusion in 1829. He personally explained the procedure to Lane.

Lane describes the procedure in great detail. He collected blood from ‘a stout, healthy young woman’ into a funnel which, via a stop-cock, fed directly into a syringe. Initially the donated blood clotted quickly, a problem solved by excluding air from the syringe chamber. Blood was infused and the syringe rewashed five times in total, after which about half a pint of blood had been transfused. Over the next one to two hours, George sat up in bed and drank a glass of wine and water from his own hand. ‘The contrast between life and death could scarcely be greater,’ Lane commented. George recovered fully over the next three weeks (and his strabismus was corrected).

It is possible that blood transfusion was proposed as a treatment for bleeding due to haemophilia as early as 1832 by the German physician Johann Schönlein (who gave his name to Henoch-Schönlein purpura),2 but Lane’s is the first definitive account. He and George were fortunate that the donor had compatible blood and that bacterial contamination was somehow averted. Such problems brought a temporary halt to blood transfusion until blood groups were discovered at the turn of the 20th century. Subsequent research revealed that blood fractions contained the necessary clotting factors and, in the mid-1930s, the therapeutic potential of plasma precipitate was demonstrated.

References

- Lane S. Haemorrhagic diathesis. Successful transfusion of blood. Lancet 1840; 35: 185-8.

- Schramm W. The history of haemophilia – a short review. Thromb Res 2014; 134 (Suppl) :S4–S9. doi: 10.1016/j.thromres.2013.10.020

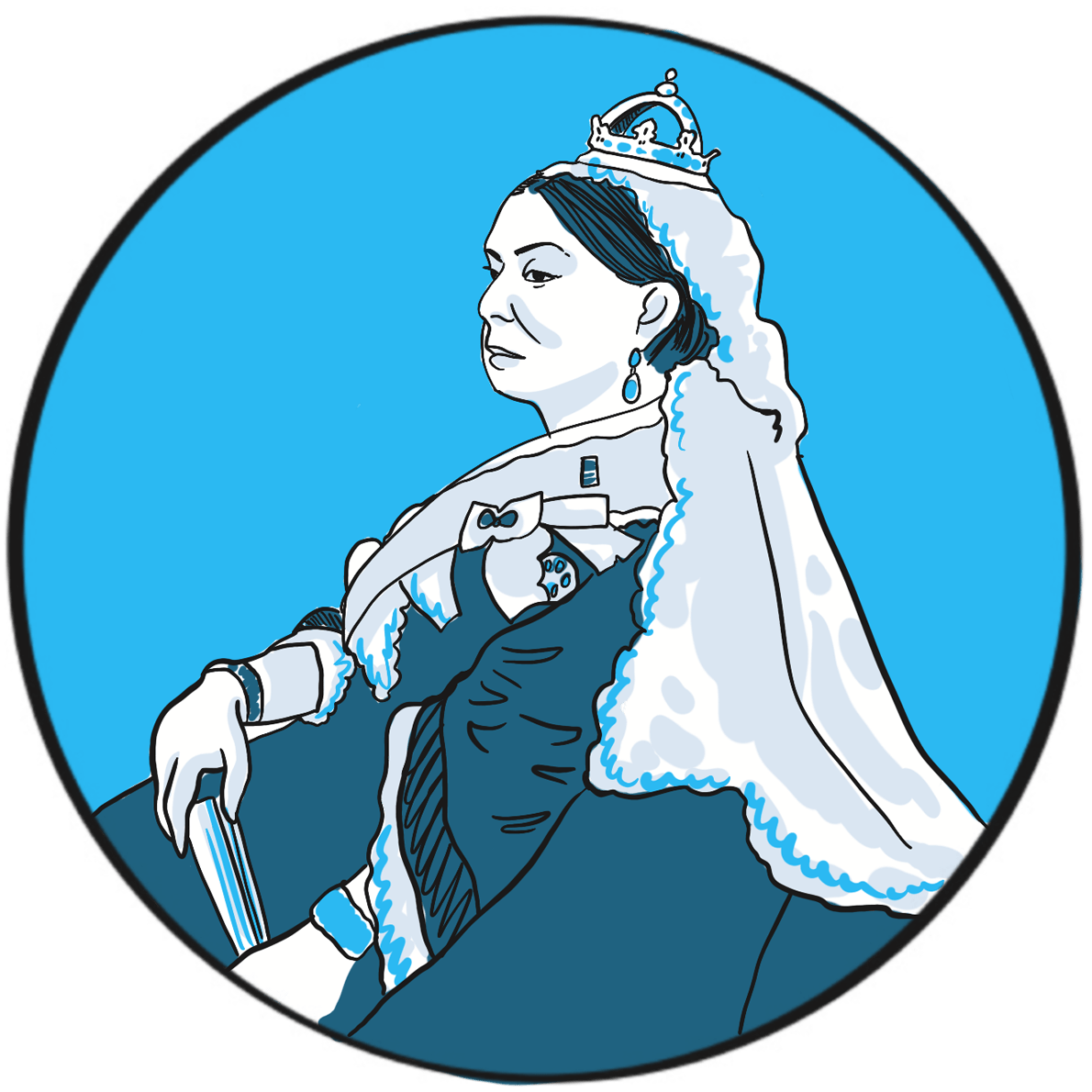

Haemophilia was unusually prevalent among European royalty in the 19th century – something that is attributed to its emergence in Queen Victoria’s family and the practice of arranging marriages between royal houses to secure power and influence.

Prince Leopold George Duncan Albert, the eighth of Victoria’s nine children and her youngest son, was born on 7 April 1853. He was diagnosed with haemophilia in childhood and was reputedly a delicate child. He died in 1884, probably of a cerebral haemorrhage following a fall. At least two of Victoria’s daughters, Alice and Beatrice, were haemophilia carriers, as was Leopold’s daughter Alice. Their marriages and those of their children resulted in the emergence of haemophilia in the Spanish and Russian royal families. In total, nine or ten of Victoria’s male descendants were known to have had haemophilia; the true number of women who were carriers is not known, as some died without having children.

Victorian society did not deal with the issue well. Britain in the mid-19th century was, like much of Europe, experiencing revolutionary fervour. In 1848, the Queen was sent to the Isle of Wight to protect her from the Chartist marchers in London. During her reign, she survived six assassination attempts. Haemophilia was seen as a weakness by an establishment that could not afford to yield an inch to republicanism.

In 1868, the medical and lay press carried reports of Leopold’s bleeding episodes. One commentator suggests ‘the disease was an embarrassment to the monarchy, who generally kept as quiet as possible about it …’1 With careful media management, some papers reported public sympathy for the family’s plight, but the republican press ‘argued that the disaffected working classes resented the hyperbole connecting the health of royal individuals with the political future of the entire nation.’1 The ensuing political manoeuvring meant that the public acquired an unusually deep understanding of ‘the royal disease’ – but arguably one that, by turning haemophilia into a political football, may have contributed to the stigmatisation that people experience to this day.

It is believed that haemophilia in the British royal family arose through a spontaneous mutation originating with Victoria’s father, Prince Edward. In 2009, genetic analysis of bone samples from the Russian royal family identified the IVS3-3A>G mutation in the factor IX gene, a single-nucleotide substitution in the splice acceptor site of exon 4 – something that would cause severe haemophilia B.2,3

References

- Rushton AR. Leopold: the “bleeder prince” and public knowledge about hemophilia in Victorian Britain. J Hist Med Allied Sci 2012; 67: 457‒90. doi: 10.1093/jhmas/jrr029.

- Rogaev EI, Grigorenko AP, Faskhutdinova G, et al. Genotype analysis identifies the cause of the “royal disease”. Science 2009; 326: 817. doi: 10.1126/science.1180660.

- Green P. The ‘Royal disease’. J Thromb Haemost 2010; 8: 2214–5. doi: 10.1111/j.1538-7836.2010.03999.x.

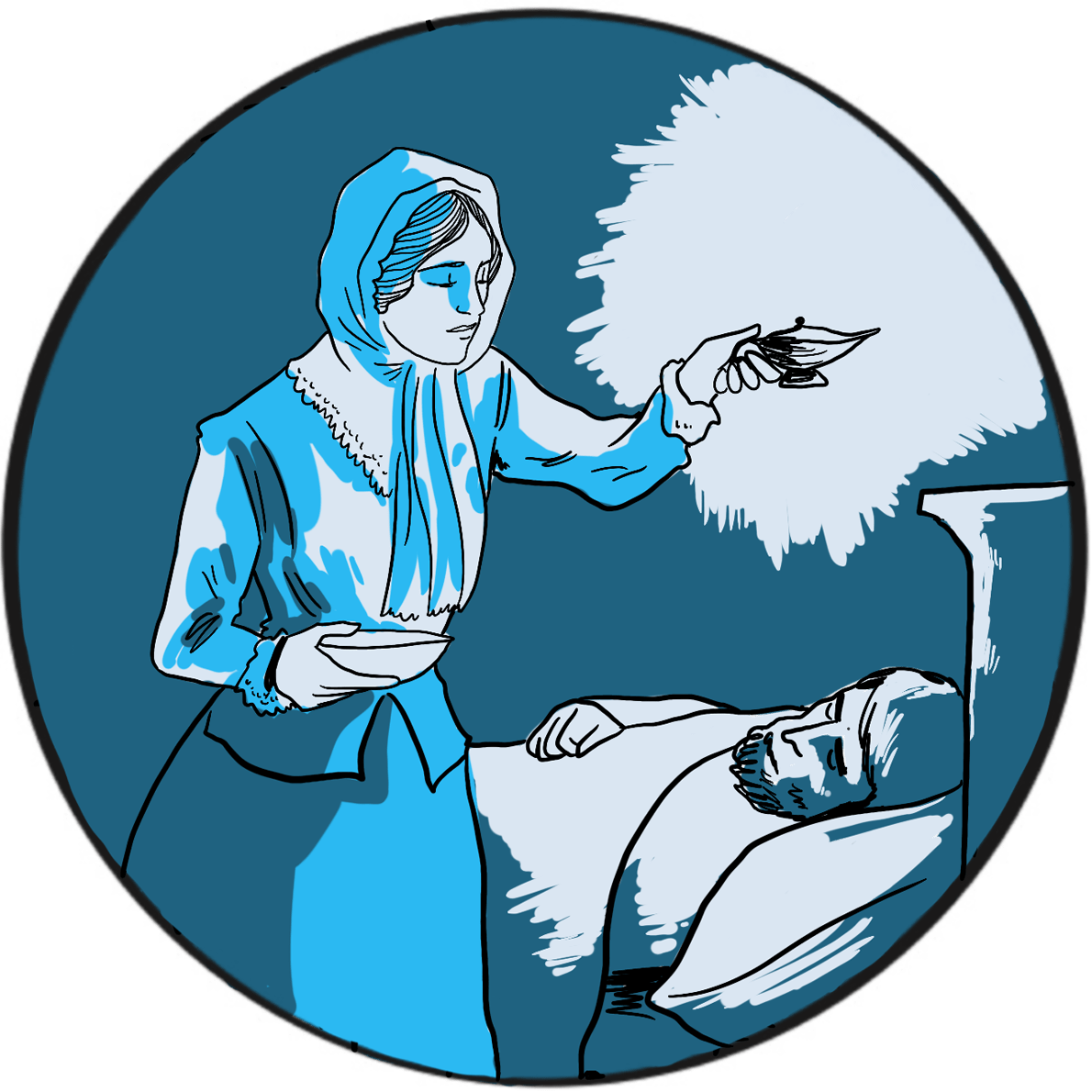

Florence Nightingale (1820–1910) – known as the Lady of the Lamp, a British icon, feminist, pioneer and founder of modern nursing – is widely regarded as a compassionate and brave hero in a time of exceptional brutality and suffering, and as a woman who achieved change in a world dominated by men. She abandoned a relatively privileged life against her family’s wishes to pursue a career as a nurse, obtaining the post of Superintendent at the Establishment for Gentlewomen During Illness in London’s Harley Street. In 1854, in response to public pressure, the Minister for War appointed her to lead a team of volunteer nurses to improve the dreadful state of military hospitals in the Crimea. Working at the barracks hospital in Scutari, Turkey, she forged her reputation as a champion of the wounded and dying soldiers.

But Florence Nightingale’s achievements in other fields should also be acknowledged. On returning to Britain, she successfully lobbied the Queen to set up a royal commission into the insanitary conditions suffered by the British Army. She was an eminent statistician and one of the first to present dry facts as accessible diagrams easily understood by the general public. With charitable funds raised in her name, she supported training for nurses at several London hospitals and was instrumental in transforming the profession’s mission and public standing. Her achievements were recognised with several public honours, and in 1907 she became the first woman to be awarded the Order of Merit.

In 1858, Nightingale submitted to the Secretary of State for War her Notes on Matters Affecting the Health, Efficiency, and Hospital Administration of the British Army, Founded Chiefly on Experience of the Late War.1 In it, with the benefit of hindsight, she summarised her evidence to the royal commission on the appalling sanitary conditions that soldiers endured during the Peninsular (1808–1814) and Crimean (1853‒1856) Wars and in army hospitals in Britain. The report was never published, but she circulated it privately. Her analysis showed that, on average, 39% of soldiers were sick at any one time, and that at one phase of the Crimean War mortality due to disease was 73%. She wrote:

‘But if we go through the whole Crimean force, Regiment by Regiment, and mark what the Admissions into Scutari Hospitals were, and what the Deaths there during those fatal seven months, we shall see how much of the mortality was due in the Crimean case also to the frightful state of the General Hospitals at Scutari; how much it depended upon the number which each Regiment was unfortunately enabled to send to these pest-houses.’

Nightingale’s role at Scutari was primarily administrative, which probably explains her attention to effective provisioning and supply. She recognised that the sanitary conditions at the hospital were a contributory factor to the death rate, stating ‘want of ventilation, want of drainage, bad water, and foul air… nobody seems to have thought of trying the effect of pure air’. Despite Nightingale’s best efforts, mortality remained stubbornly high and it was not until a Government Sanitary Commission was sent to inspect the hospital that it was found to be built on a sewer. Nightingale had already noted how liquid faeces from patients with dysentery ran over the floors. When the sewer was flushed out and ventilation improved, the death toll began to fall.

Florence Nightingale is therefore widely seen as the pioneer who recognised that infection was a major cause of death during hospital care ‒ but some say that her appreciation of the importance of hygiene and sanitation was a late conversion. At Scutari, she had been more concerned with nutrition and the poor general health of soldiers, so that ‘She had helped them to die in cleaner surroundings and greater comfort, but she had not saved their lives.’2

During an inspection tour of hospitals in the Crimea, she became severely ill with brucellosis but insisted on continuing her task. The Queen was kept informed and there were prayers for her recovery. The Times christened her ‘The Lady with the Lamp’ and a ‘ministering angel’, capturing the sentiment of the day and defining her legacy. How Florence Nightingale came to be a powerful advocate of good hygiene and sanitation is perhaps beside the point. Her impact at the time was immense, and her influence on the nursing profession is still apparent more than 150 years later.

References

- Nightingale F. Notes on Matters Affecting the Health, Efficiency, and Hospital Administration of the British Army, Founded Chiefly on Experience of the Late War. London: Harrison & Sons, 1858 (accessed March 2019).

- Bostridge M. Florence Nightingale: the Lady with the Lamp. Last updated February 2011. BBC History (accessed March 2019).

Further reading

McDonald L. Florence Nightingale, Nursing, and Health Care Today. New York: Springer Publishing, 2017. ISBN: 0826155588.

Baly ME, Matthew HCG. Florence Nightingale. Oxford Dictionary of National Biography. Version 06 January 2011. doi: 10.1093/ref:odnb/35241 (accessed March 2019).

In the mid-19th century, the prevailing Western scientific opinion was that life was created spontaneously: put some nutrient broth in an open glass flask and, within a week or so, the mixture becomes cloudy with microbial growth that has generated spontaneously.

French chemist Louis Pasteur (1822–1895) did not accept this theory. In 1859, in the face of scepticism from the medical establishment, he ingeniously demonstrated that the source of microbes was contamination from the local environment.

This discovery was put to a practical test in 1863 when Napoleon III tasked Pasteur with solving a problem that was costing the French wine industry dearly: why did wine go off after being bottled? The principle of preserving food by boiling had been known for centuries, but this was not feasible for wine because it destroyed the flavour and evaporated the alcohol. Pasteur was able to show that it was necessary only to heat the wine to 50–60 degrees Celsius for a brief period to kill the causative organisms without significantly affecting the flavour. He had similar success with beer, and a grateful nation christened the process of heat treatment Pasteurisation in his honour.

Between 1865 and 1870, Pasteur’s research demonstrated that a blight threatening the French silkworm industry was due to an infection transmitted by parasites. On his advice, the infected worms were destroyed and another industry was saved. His subsequent work built on Jenner’s discovery of the principle of immunisation, developing attenuated vaccines against anthrax and rabies.

Pasteur carried out his work in the face of considerable personal tragedy: in 1868, a stroke left him partially paralysed and, between 1859 and 1866, he lost two daughters to typhoid and a third to cancer. The public health benefits of his heat-treatment process began to be recognised by the early 1900s, but in the UK many people still contracted serious milk-borne infections. As late as 1943, it was known that 5%‒10% of the milk supply was contaminated by tuberculosis bacilli and 20%‒40% contained Brucella abortus.1 Many vociferously opposed milk pasteurisation because they thought its flavour and nutritional quality suffered. However, the gradual introduction of voluntary standards resulted in most of the milk supplied (to London, at least) being pasteurised by 1950.2

The UK still consumes more fresh milk than many other European countries, where heat-treated long-life milk is more acceptable. It is still legal to sell raw milk, provided it comes from herds that are registered free of tuberculosis and brucellosis and are regularly monitored, and only through designated outlets or direct online sales.3

References

- Wilson GS. The pasteurization of milk. Br Med J 1943; 1: 261‒2.

- Atkins PJ. The long genealogy of quality in the British drinking-milk sector. Historia Agraria 2017; 73: 35‒58. doi: 10.26882/HistAgrar.073E01a (accessed March 2019).

- Food Standards Agency. Raw drinking milk: advice on drinking raw milk and the safety regulations in place concerning its sale. Last updated September 2018 (accessed March 2019).

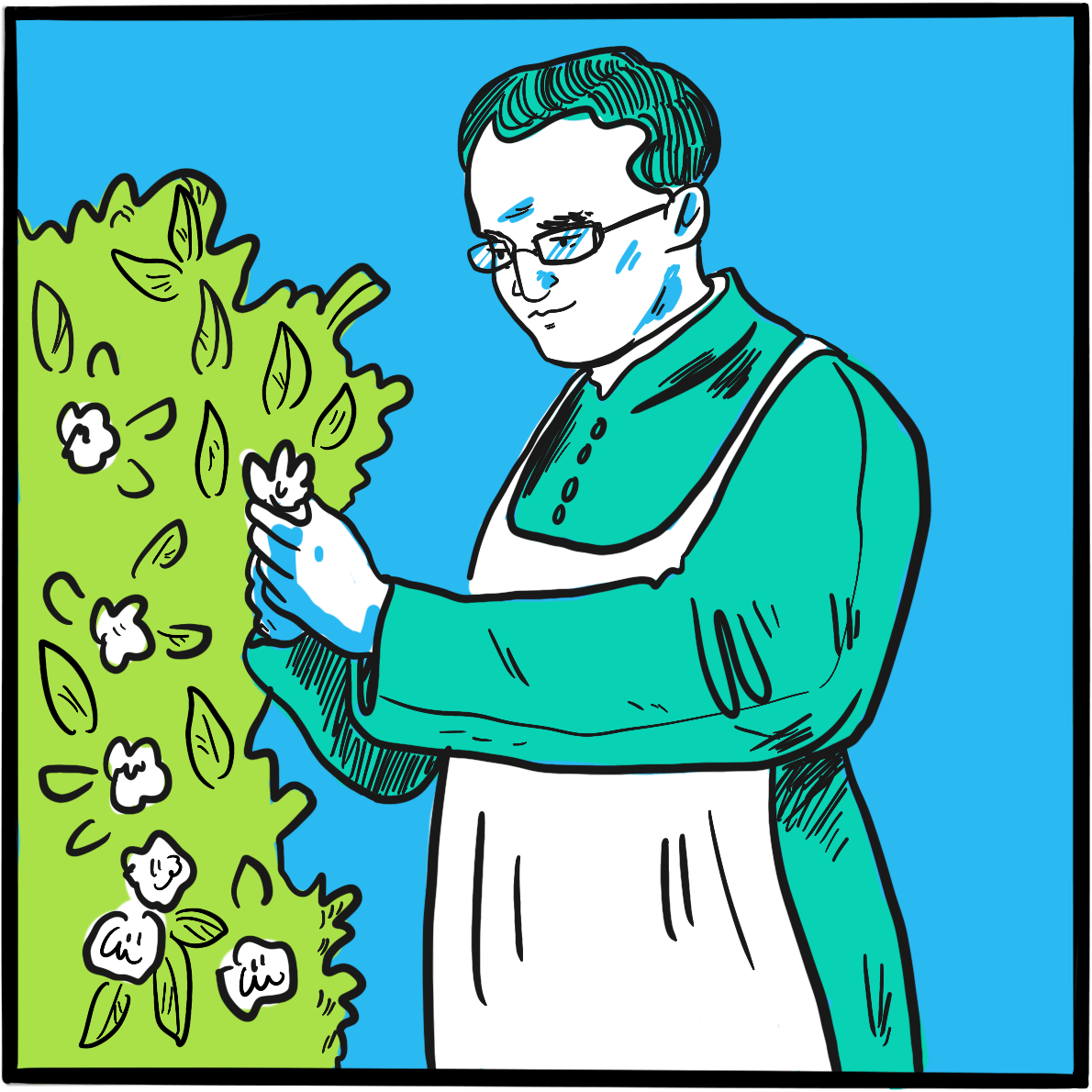

In 1865, an Austrian monk presented the findings of his eight-year research project to the Society for the Study of the Natural Sciences in Brünn (Brno). In Experiments in Plant Hybridization, he described how he had grown approximately 10,000 pea plants and, from his observations, deduced how their characteristics passed between generations.

Gregor Mendel (1822‒1884) is now considered to be the father of modern genetics, but his research challenged scientific orthodoxy, provoked controversy and brought accusations of fraud. He did not live to see his work recognised by the scientific community.

Mendel was born in Moravia, then part of Austria and now in the Czech Republic. He was academically bright but his health was poor. After obtaining a degree in philosophy, in 1843 he joined a monastery, where he was able to continue his education. He studied agriculture and viniculture, and was ordained four years later. However, Mendel was unsuited to pastoral duties and failed to qualify as a teacher, so became a part-time assistant teacher. This allowed him the time and resources to pursue his scientific work and, between 1857 and 1864, he completed his study of hybridisation.

Accepted wisdom at the time was that heredity was the result of a blending process that diluted parental traits. Mendel observed that seven characteristics of peas showed no signs of dilution at all:

‘The angular wrinkled form of the seed, the green colour of the albumen, the white colour of the seed-coats and the flowers, the constrictions of the pods, the yellow colour of the unripe pod, of the stalk, of the calyx, and of the leaf venation, the umbel-like form of the inflorescence, and the dwarfed stem, all reappear in the numerical proportion given, without any essential alteration. Transitional forms were not observed in any experiment…’

Instead, he noticed that, when plants differing in one of these characteristics were crossed, all the first-generation hybrids showed only one form, which he labelled ‘dominant’. The latent, ‘recessive’ form reappeared in the next generation in the ratio of three dominant to one recessive. Mendel had shown that inheritance depended on the combination of two hereditary factors (now called alleles), one from each parent, of which one could mask the other, rather than on a blend. He thereby established two principles of heredity: the law of segregation (each gamete carries only one allele for each gene) and the law of independent assortment (alleles of different genes are inherited independently of one another).
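The three-to-one ratio follows directly from segregation. The short Python sketch below illustrates the arithmetic; it is not Mendel's own notation. It assumes a single gene with two alleles, written with the hypothetical letters A (dominant) and a (recessive), and uses illustrative function names (`cross`, `phenotype_ratio`). It enumerates every equally likely pairing of one gamete from each parent and counts the resulting forms.

```python
from collections import Counter
from itertools import product


def cross(parent1: str, parent2: str) -> Counter:
    """Enumerate offspring genotypes of a single-gene cross.

    Each parent is a hypothetical two-letter genotype such as "Aa". By the
    law of segregation, each gamete carries one of the parent's two letters,
    so every pairing of gametes is taken to be equally likely.
    """
    genotypes = Counter()
    for g1, g2 in product(parent1, parent2):
        # Sort so that "aA" and "Aa" count as the same genotype
        # (uppercase letters sort before lowercase ones).
        genotypes["".join(sorted(g1 + g2))] += 1
    return genotypes


def phenotype_ratio(genotypes: Counter) -> tuple[int, int]:
    """Count (dominant, recessive) forms: any uppercase allele masks the lowercase one."""
    dominant = sum(n for g, n in genotypes.items() if any(c.isupper() for c in g))
    recessive = sum(genotypes.values()) - dominant
    return dominant, recessive


# First generation: a true-breeding dominant plant crossed with a recessive one.
f1 = cross("AA", "aa")
# Second generation: two first-generation hybrids crossed with each other.
f2 = cross("Aa", "Aa")

print(phenotype_ratio(f1))  # (4, 0): every hybrid shows the dominant form
print(phenotype_ratio(f2))  # (3, 1): the recessive form reappears in one case in four
```

Crossing true-breeding AA and aa parents gives only Aa hybrids, all showing the dominant form. Crossing two Aa hybrids gives AA, Aa, Aa and aa, so three of the four equally likely combinations show the dominant form.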

Mendel’s paper was largely ignored. Darwin’s On the Origin of Species by Means of Natural Selection, or the Preservation of Favoured Races in the Struggle for Life had been published in 1859, but it seems the potential link between the two theories was not made. European botanists confirmed Mendel’s work in 1900, but it was several more decades before it was accepted.

After being elected to succeed the abbot at the monastery in 1868, Mendel spent most of his time on administration, in particular opposing a new tax. He published only one more scientific paper, this time on hawkweed. However, it did not confirm his original hypothesis (because this plant reproduces asexually, generating clones) and was completely ignored. Mendel died, in considerable discomfort, of Bright’s disease (chronic nephritis), aged 61.

Further reading

Dunn PM. Gregor Mendel, OSA (1822–1884), founder of scientific genetics. Arch Dis Child Fetal Neonatal Ed 2003; 88: F537–9.

Bicknell R, Catanach A, Hand M, Koltunow A. Seeds of doubt: Mendel’s choice of Hieracium to study inheritance, a case of right plant, wrong trait. Theor Appl Genet 2016; 129: 2253‒66.

Gayon J. From Mendel to epigenetics: History of genetics. C R Biol 2016; 339: 225‒30. doi: 10.1016/j.crvi.2016.05.009.

It is rare that innovators develop their radical ideas in isolation. One exception was Gregor Mendel, who first described the pattern of heredity ‒ and, for his trouble, was ignored by the scientific community until it caught up 35 years later. The development of the telephone is a case of the opposite: but for a phrase or two omitted from a patent application, the inventor uppermost in the public consciousness might be Antonio Meucci rather than Alexander Graham Bell. Both were working on electrical voice transmission; Bell got the patent.

Born in Scotland in 1847, Bell migrated to Canada in 1870 and moved to Boston in the United States the following year. He came from a family of elocution experts with a background in teaching deaf people a system of ‘visible speech’, and had been working on developing mechanical speech devices. He realised that sound waves could be converted into a fluctuating electric current which, if transmitted to a receiver, could be turned back into sound. By 1875 he had developed a basic device that could convert electricity into sound.

Bell had a powerful incentive to succeed. His patron was the lawyer and politician Gardiner Hubbard, whose daughter Mabel was deaf. Bell taught her to lip read and speak, then fell in love with her. Hubbard refused his consent to marriage until Bell had perfected his electrical speaking device. The prospective father-in-law was also an astute businessman and in 1876 filed a patent to protect the work. Crucially, this covered the electromagnetic transmission of vocal sound.

On 10 March 1876, Bell summoned his assistant from an adjacent room, shouting the words “Mr Watson, come here, I want to see you” through his new telephone. The following year, Bell, Hubbard, Watson and others founded the Bell Telephone Company ‒ Bell and Mabel were married two days later.

Coincidentally, an electrical engineer named Elisha Gray had also filed a patent for a telephone device on the same day, a matter of hours after that filed by Hubbard and at the same patent office. This prompted speculation that Bell’s application was granted only after some fancy footwork and resulted in the matter coming before the courts. The case was settled in Bell’s favour.

More seriously, an Italian American named Antonio Meucci had filed a temporary patent application in 1871 for his ‘Sound Telegraph’, a device he had been working on since 1849. He could not afford the fee for a full application and made no mention of electromagnetic voice transmission. The application expired in 1874 and he did not have the money to renew it. Meucci had shared a workshop with Bell in the 1870s. He sued Bell but died penniless in 1889 before the action was concluded. He was formally recognised by the US Congress as the true inventor of the telephone in 2002.

Bell fought several more court battles to defend his patent, eventually joining one litigant, Thomas Edison, to form the United Telephone Company. He continued his research in communication and techniques for teaching speech to the deaf, working with Helen Keller at one stage. He was one of the founding members of the National Geographic Society in 1888, and served as its second president from 1898 to 1903. He died on 2 August 1922.

Further reading

Alexander Graham Bell’s box telephone. National Museums Scotland. Available from https://www.nms.ac.uk/explore-our-collections/stories/science-and-technology/alexander-graham-bell (accessed March 2019).

Smith C. The history of the telephone: Six pioneers who transformed the world of communications. British Telecom. Updated 10 March 2018 (accessed March 2019).

Antonio Meucci. Famous Scientists: The Art of Genius (accessed March 2019).

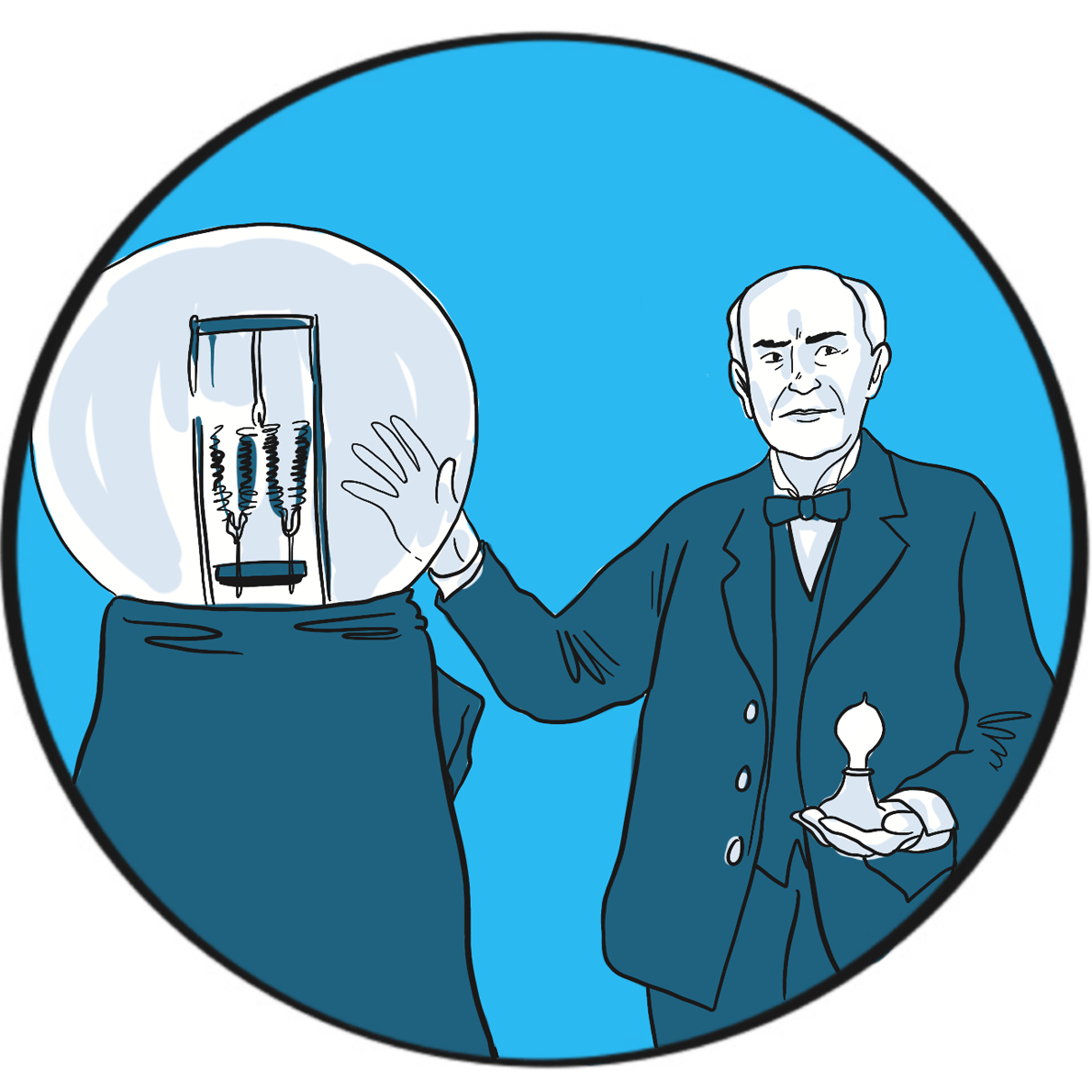

If you ever need a quote, Thomas Edison (1847‒1931) is your man. Google his name and up pop some very wise words, including:

I have not failed. I’ve just found 10,000 ways that won’t work

and:

Genius is one percent inspiration and ninety-nine percent perspiration

Those words certainly embody his life. Edison has been described as America’s greatest and most brilliant inventor. He was certainly among the most productive, holding more than one thousand US patents. He is credited with inventing the phonograph (1877), the carbon telephone transmitter (used in telephones until the 1980s), a system for distributing electricity (though he was misguided in his criticism of alternating current) and a practical fluoroscope (late 1890s), and he was instrumental in developing the kinetoscope (1891) and an early battery (1910). But he is best known for developing the first commercially feasible incandescent electric light bulb in 1879. He achieved all this despite being profoundly deaf – something he said allowed him to concentrate.

Edison wasn’t the only inventor trying to develop a light bulb. However, he was the first to create a filament that could, when a voltage was applied to it, produce sufficient light without quickly burning up. His researchers tested thousands of carbonised plant materials and finally came upon cotton thread. In the high-quality vacuum he was able to create in bulbs made in his own glass-blowing shed, the thread glowed for 15 hours before burning up. He refined the design until the bulb had a serviceable life, and then patented it in the United States.

As is the way with competitive inventors, the patent was disputed by a rival firm and it took six years for Edison to establish its validity. In turn, Edison sued the English inventor Joseph Swan, claiming infringement, but the two agreed to avoid a court battle by uniting their companies to form the Edison & Swan United Electric Light Company in Britain.

Edison didn’t invent the light bulb, but he created the first design that did the job. He then improved it again by using a carbonised bamboo filament that lasted 1,200 hours. The familiar modern version, with a spiral tungsten filament in a bulb filled with an inert gas, was introduced in 1913.

Further reading

Edison’s lightbulb. The Franklin Institute (accessed March 2019).

Lighting a revolution: U.S. patent 223,898, Thomas Edison’s incandescent lamp. Smithsonian Institution (accessed March 2019).

Thomas Edison. Wikipedia. Updated 11 March 2019 (accessed March 2019).

Royal jubilees are not entirely rare but certainly not common. There have been 66 monarchs of England or Great Britain since Athelstan in the tenth century, of whom six reigned for at least 50 years: Henry III, Edward III, James VI and I, George III, Victoria and Elizabeth II. Jubilees are known to have been celebrated in a significant way only since George III, who reigned from 1760 to 1820.

Victoria’s golden jubilee was celebrated on 20 and 21 June 1887. The official website of the royal family records that her day began with a quiet breakfast under the trees at Frogmore, the royal burial ground in Berkshire where her consort Prince Albert was buried. That evening, a royal banquet was attended by 50 foreign kings and princes, and the governing heads of Britain’s overseas colonies and dominions. The next day, blessed by unusually good weather, Victoria travelled to Westminster Abbey in an open carriage, greeted by cheering crowds all the way. She wrote in her journal:

‘No one ever, I believe, has met with such an ovation as was given to me, passing through those 6 miles of streets … The cheering was quite deafening & every face seemed to be filled with real joy. I was much moved and gratified.’1

The next day’s celebrations included meeting 10,000 schoolchildren in the rain on London’s Constitution Hill, followed by a civic reception in Slough.

The jubilee was marked by the kind of short-lived philanthropy that has been a feature of public celebrations over the years, the expense divided between the royal household and the state. Street feasts were provided for 400,000 of London’s poorest and another 100,000 in Manchester. There were firework displays, and St Paul’s Cathedral was illuminated by a son et lumière (sound and light) show; Nottingham, Bradford and Hull were awarded city status. Free ale and pipe tobacco were provided by the tea magnate Sir Thomas Lipton and the pubs stayed open until 2.30 am.

The golden jubilee marked the height of British global power and, as ever, no opportunity to promote the national interest could be ignored. Victoria had taken the title Empress of India in 1876, and the celebrations emphasised Britain’s interests in that country, with many Indian princes in attendance and the queen’s carriage led by an escort of Indian cavalry. But, although Victoria was a strong supporter of the empire and jingoism was common in political and social life, historians argue that Britain as a whole was not in the grip of imperialist fervour.2

The event also marked Victoria’s return to public life. She had rarely been seen in public since Albert’s death in 1861, after which she wore only black (even at the jubilee), and significant republican sentiment had emerged. However, Britain’s growing economic and imperial success bolstered her popularity in the 1870s, and support for the queen was genuine among many people on the day. She celebrated her diamond jubilee with similar pomp and circumstance in 1897.

Victoria reigned until her death in 1901, having been head of state for 64 years during a remarkable century characterised by exceptional change.

References

- A history of jubilees. The Royal Household (accessed March 2019).

- Tombs R. Englishness in the English century. In: The English and their History. London: Penguin, 2014.

Further reading

Victoria (r. 1837-1901). The Royal Household (accessed March 2019).

Sully A. Queen Victoria and Britain’s first diamond jubilee. BBC News. 22 May 2012 (accessed March 2019).

Queen Victoria’s golden jubilee. Making Britain: Discover how South Asians shaped the nation, 1870–1950. The Open University (accessed March 2019).

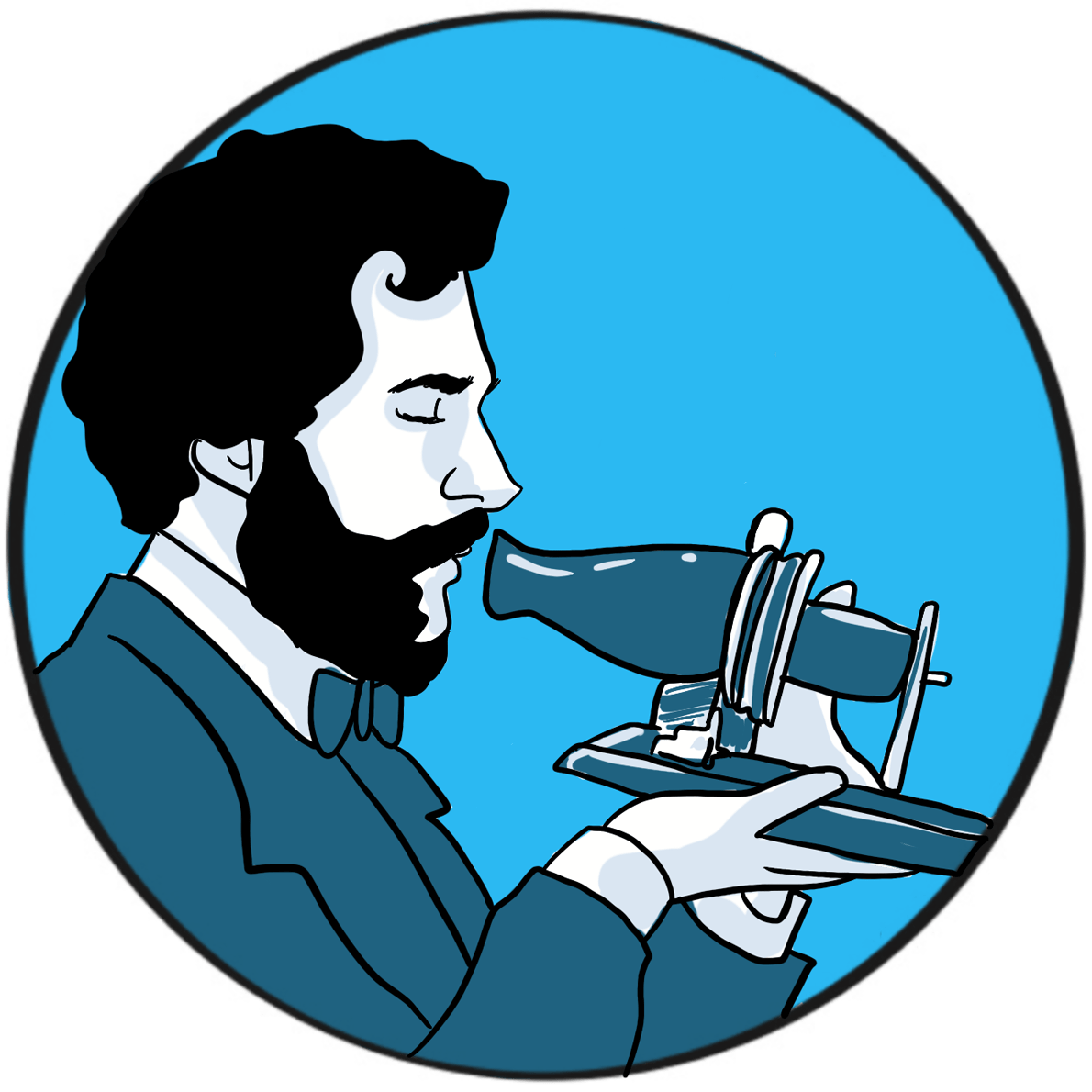

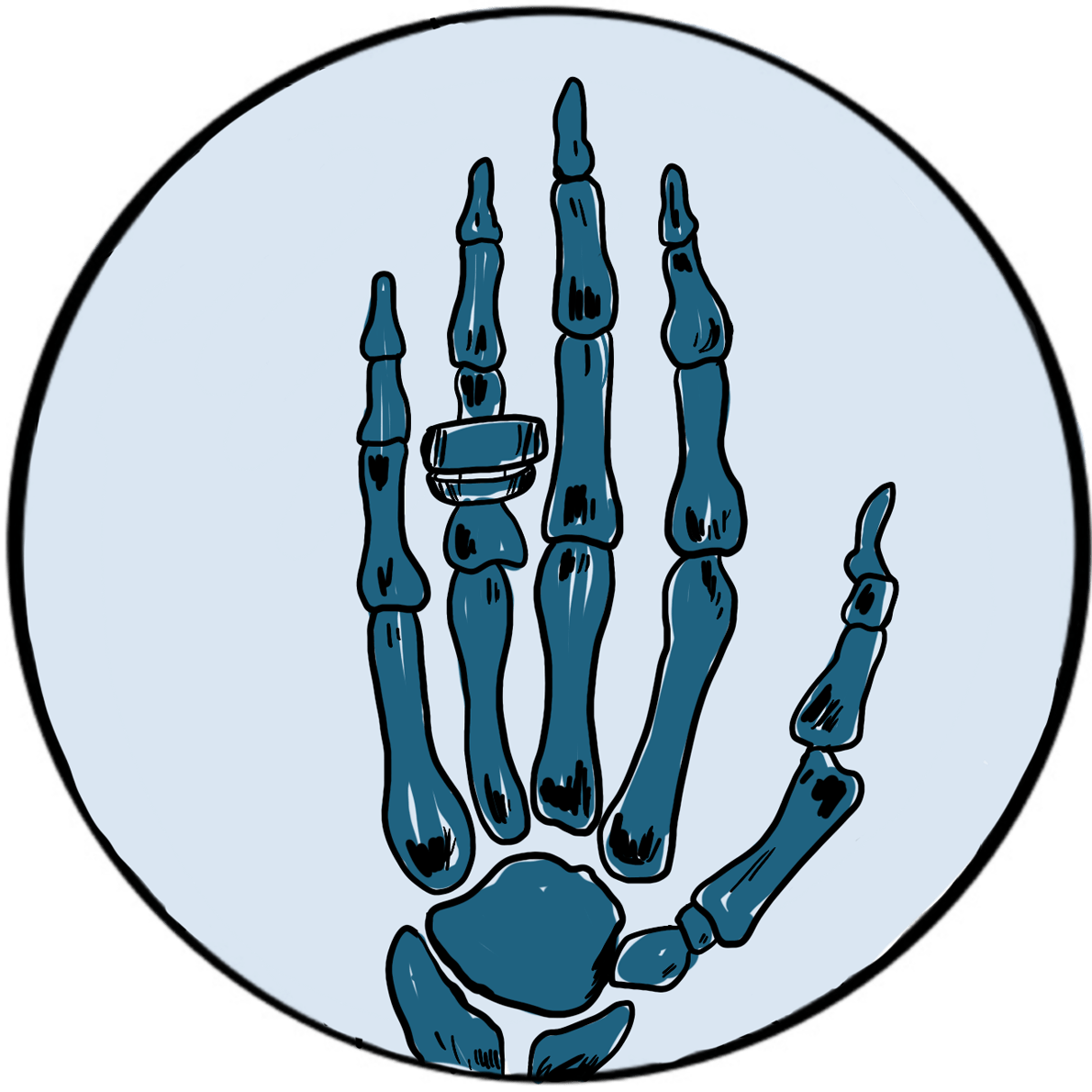

The German physicist Wilhelm Conrad Röntgen was awarded the 1901 Nobel prize in physics for his discovery of x-rays. His breakthrough arose during research into the properties of the recently discovered cathode rays ‒ the technology that would eventually give the world television.

Röntgen was born in the Lower Rhine Province of Germany in 1845; his family moved to the Netherlands when he was three. Following a largely unremarkable school career ‒ marked only by an aptitude for making mechanical devices ‒ he studied physics at university, where his academic star began to shine. He was strongly influenced by the work of August Kundt and Rudolf Clausius on thermodynamics and light; in 1869, after graduating with a PhD from the University of Zurich, Röntgen worked with Kundt for several years.

Several professorships in European cities followed: Röntgen was, it seems, head-hunted by the top universities of the day until, in 1900, he accepted the Chair of Physics at the University of Munich, where he remained for the rest of his career.

During the late 19th century, several scientists had been investigating the rays emitted by the cathode when an electrical current passed through a gas held at low pressure in a glass discharge tube. It was known that the rays could be deflected by electric and magnetic fields, and they were subsequently found to consist of the subatomic particles later known as electrons. The cathode ray tube, which uses a vacuum rather than a low-pressure gas, provided the image in the first television in 1926 and was used in sets until the advent of plasma, LCD and OLED screens in the early 21st century.

Röntgen observed that if the discharge tube was sealed to exclude light, a plate coated with barium platinocyanide (a compound that emits light when exposed to radiation) fluoresced when placed in the path of the rays in a darkened room. He subsequently showed that putting various objects in the path of the rays attenuated the fluorescence to varying degrees but did not prevent it. He then placed his wife’s hand between the discharge tube and a photographic plate; when this was developed, he had an image of the hand, with dark shadows cast by the bones, faint shadows from the flesh and the clear outline of a ring. This was the first Röntgenogram. When his wife saw the image, she is said to have exclaimed “I have seen my own death!”.

Although he realised that the rays producing these images were not the same as the cathode rays in the discharge tube, Röntgen was uncertain of their nature and christened them x-rays. They were later shown by Max von Laue to be electromagnetic waves with properties similar to light ‒ a discovery for which von Laue was awarded the 1914 Nobel prize in physics.

X-rays were being used as a diagnostic tool by the turn of the century, though machines were cumbersome and unreliable. In 1912, former US President Theodore Roosevelt was shot in an unsuccessful assassination attempt; x-rays were used to locate the bullet, showing that the risk of removing it was greater than that of leaving it in place.

Although he was showered with honours for his research, Röntgen is said to have been a modest and reticent person; he was colour blind and nearly blind in one eye. Preferring to work alone, he had built much of his apparatus himself. He gave the money from his Nobel prize to his university. He died in 1923 from bowel cancer.

Further reading

Wilhelm Conrad Röntgen: biographical. The Nobel Prize (accessed March 2019).

Gunderman RB. X-Ray Vision: The Evolution of Medical Imaging and Its Human Significance. Oxford University Press, 2013. ISBN-13: 978-0199976232.

Howell JD. Early clinical use of the x-ray. Trans Am Clin Climatol Assoc 2016; 127: 341‒9.

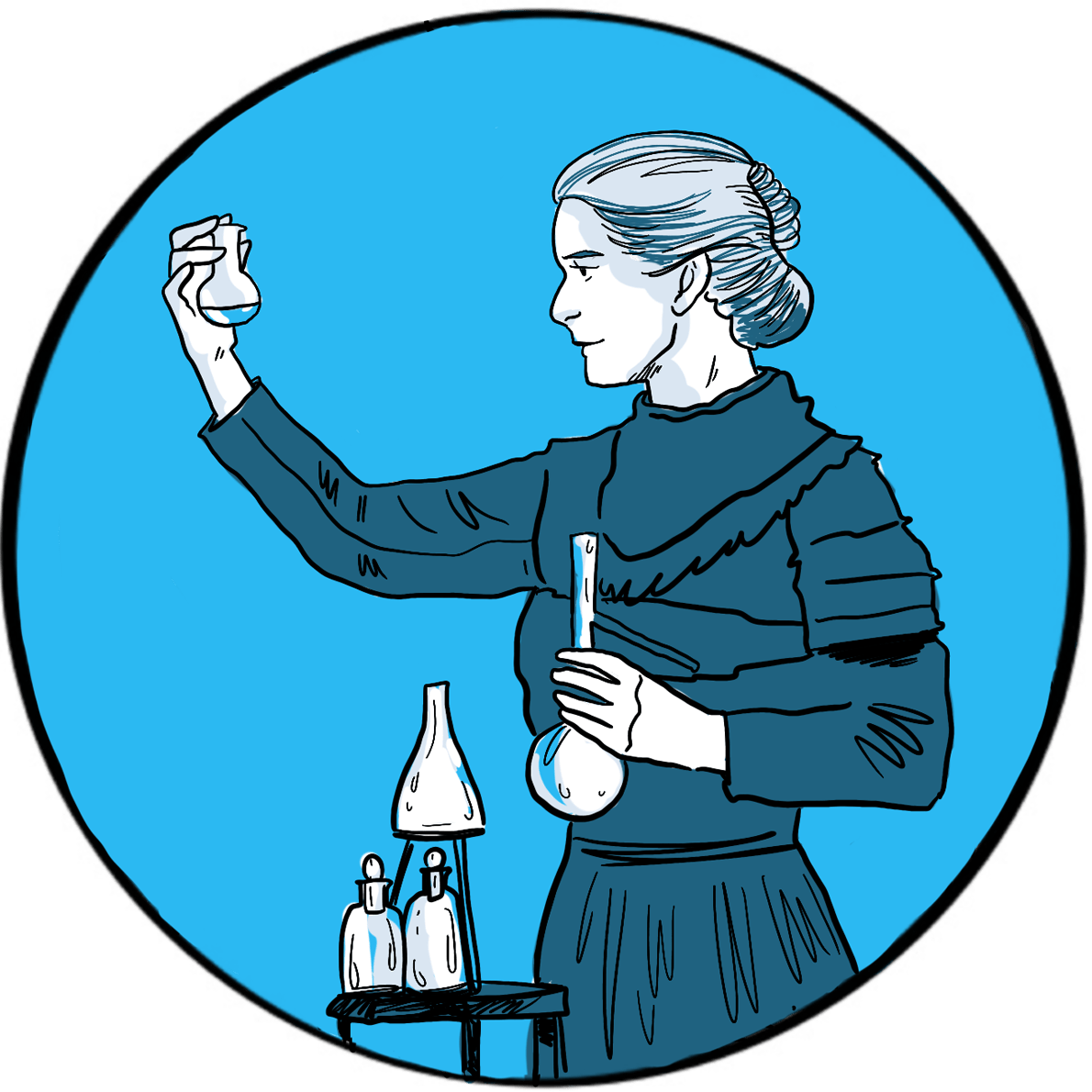

In 1896, the French physicist Henri Becquerel was one of many scientists working on Röntgen’s newly discovered x-rays. Having inherited some uranium salts from his father, he was trying to determine whether there was a link between the naturally phosphorescent substance and x-rays. He noted that uranium was capable of fogging a photographic plate when placed nearby, suggesting that the salts emitted rays, and went on to show that (unlike x-rays) these rays could be deflected by an electromagnetic field and could ionise gas. Becquerel had discovered spontaneous radioactivity, for which he was awarded the 1903 Nobel prize in physics. The cause of his death in 1908 is unknown, although he is believed to have experienced serious burns from handling uranium.

Becquerel shared his Nobel prize with Marie and Pierre Curie for their work on spontaneous radiation. Pierre was born in Paris in 1859. After enrolling at the Sorbonne, he rose through the ranks of academia to become Professor of General Physics in the Faculty of Sciences. Marie was born in Poland in 1867 and had a distinguished academic career, joining the Sorbonne in 1891. They were married in 1895.

The Curies’ research on radioactive substances proved to be ground-breaking, but was carried out in poor conditions and alongside a heavy teaching commitment. They were the first to use the term ‘radioactivity’ to describe this newly discovered property of emitting rays. They found that pitchblende (now called uraninite) was more radioactive than uranium and must therefore contain one or more other radioactive substances. In 1898, their development of techniques to refine pitchblende led to the discovery of two rare elements: radium and polonium. Pierre and Marie were both enthusiastic advocates of therapeutic applications of radioactivity; Radium Institutes were later established in Paris, London and Warsaw to develop medical uses.

The Nobel prize was originally to be awarded only to Pierre, but he insisted that his wife also be cited in recognition of her work. In 1906, he fell under a horse-drawn carriage and was killed. Marie succeeded him as Professor of General Physics at the Sorbonne, the first woman to hold the position. In 1911, she was awarded the Nobel prize in chemistry for the discovery of radium and polonium, and for research into the properties of radium.

Both Pierre and Marie experienced radiation poisoning. Marie died of aplastic anaemia in 1934. Their laboratory books are still too radioactive to handle without protection. Their daughter Irène, a physicist, and her husband Frédéric Joliot-Curie were both eminent scientists and were awarded the 1935 Nobel prize in chemistry for the discovery of artificial radioactivity; both probably died of radiation poisoning.

Units of radioactivity were named after Becquerel and the Curies in their honour. A becquerel is defined as one disintegration per second; a curie (now discontinued) is 3.7 × 10¹⁰ becquerels.

Further reading

Marie Curie: biographical. The Nobel Prize (accessed March 2019).

Pierre Curie: biographical. The Nobel Prize (accessed March 2019).

Marie and Pierre Curie: a marriage of true minds. Marie Curie Blog. 10 February 2015 (accessed March 2019).

The dawn of the 20th century shed little light on how best to treat haemophilia. Medical and scientific journals had published several accounts of generations of families affected by haemophilia and, when treatment was mentioned, most had reported great difficulty – or failure – in controlling bleeding.

In 1803, for example, the Philadelphia physician John Otto listed bark, astringents (topically and internally), strong styptics, opiates and oral sulphate of soda (sodium sulphate) as the measures employed to stem bleeding.1 Only sodium sulphate proved effective – a finding that others confirmed.

In 1840, the London physician Samuel Lane recorded how he had tried to promote the formation of a clot by applying ‘gum tragacanth and beaver gathered from a hat’.2 Success was temporary: the clot was soft and broke away with renewed bleeding.

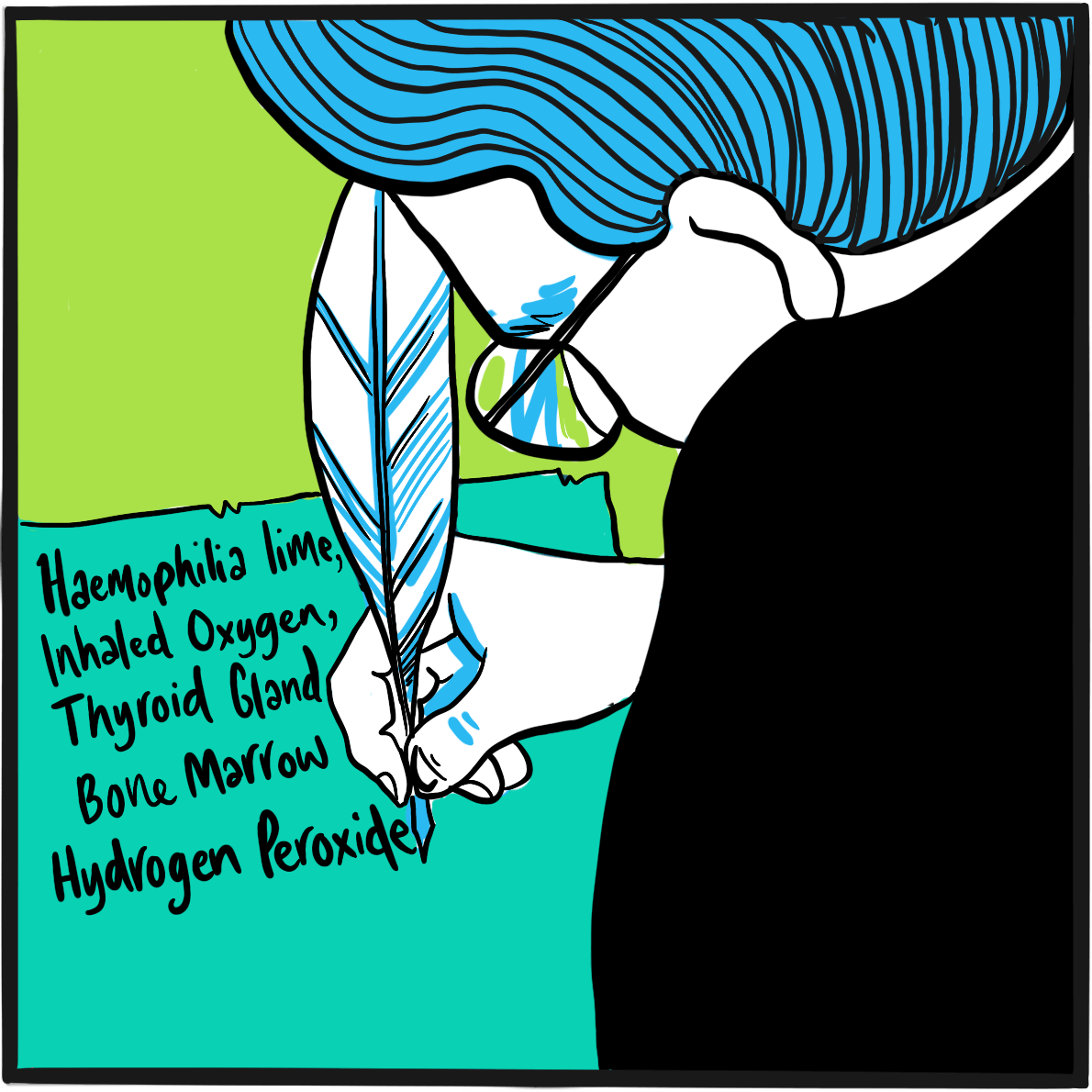

It is thanks to the efficiency of the army and its enthusiasm for record-keeping – or that of its librarians, at least – that we have a picture of the remedies in vogue at the turn of the century. The 1901 index-catalogue of the library of the Surgeon-General’s Office, United States Army, lists the publications about haemophilia held by the library.3 The titles reveal the variety of interventions that doctors considered worth reporting. They include lime (calcium oxide and/or hydroxide), oxygen, thyroid gland or extract, injection of serum, hydrogen peroxide, gelatin (including by injection), suturing, compression, calcium chloride and bone marrow.

The London medical historian G.I.C. Ingram observed that more treatments were listed in the following years, some topical to promote clot formation, others systemic.4 They include injection of sodium citrate, calcium lactate or Witte’s peptone (a protein hydrolysate), anaphylaxis, splenectomy, the ‘galvanic needle’ (passage of electric current via a wire), animal and human serum, adrenaline, the ‘muscle jostles’ of a bird, x-rays, transfusion of the patient’s own blood, oral tissue fibrinogen, female hormones, oxalic acid, a ‘bromide extract of egg white’ and the venom of Russell’s viper (which promotes thrombosis by activating factor X). Ingram notes that viper venom was the only treatment to undergo systematic evaluation. Robert Macfarlane, a junior demonstrator of pathology at London’s St Bartholomew’s Hospital (who would go on to describe the enzyme cascade underpinning coagulation and to identify haemophilia B), and Burgess Barnett, curator of reptiles at the Zoological Society of London, showed that a very dilute solution of the venom stopped bleeding immediately when applied topically.5

Although the first successful use of blood transfusion to treat bleeding due to haemophilia was published in 1840,2 it was not until 1926 that the Surgeon General’s catalogue included publications documenting its use.

References

- Otto JC. An account of an hemorrhagic disposition existing in certain families. Reprinted from The Medical Repository and Review of American Publications on Medicine, Surgery and the Auxiliary Branches of Science 1803, vol. 6, 1‒4. Clin Orthop Relat Res 1996; 4‒6 (accessed 26 February 2019).

- Lane S. Haemorrhagic diathesis. Successful transfusion of blood. Lancet 1840; 35: 185‒8. doi: 10.1016/S0140-6736(00)40031-0.

- Library of the Surgeon-General’s Office (US). Index-catalogue of the Library of the Surgeon-General’s Office, United States Army. Authors and subjects. Series 2, vol. 6. Washington: GPO, 1880‒1932 (accessed March 2019).

- Ingram GIC. The history of haemophilia. J Clin Pathol 1976; 29: 469‒79 (accessed March 2019).

- Macfarlane RG, Barnett B. The haemostatic possibilities of snake venom. Lancet 1934; 2: 985‒7. doi: 10.1016/S0140-6736(00)43846-8.

In 1914, there existed 194 treaties providing arbitration for international disputes and 400 peace organisations worldwide ‒ 46 in Great Britain and 72 in Germany. In 1913, the Dutch had opened a Palace of Peace to host treaty conferences. Peace was in abundance, but no one was surprised when the British Empire declared war on Germany at 11 pm on 4 August.

Europe festered with rivalry and bitterness. Since 1900, Germany had invested heavily in its navy to match British sea power, but was running out of money. France, Russia and Britain signed alliances despite overt rivalry in the expansion of their colonies. As early as 1908, the German military, operating beyond government control, had developed the Schlieffen Plan for a rapid invasion of Belgium, defeating France before turning on Russia. In 1912‒13, smaller countries aligned to one side or the other ‒ Serbia, Bulgaria, Romania, Greece, Montenegro and Turkey ‒ were at war. The Balkans were an open sore between Austria-Hungary and its ally Germany on one side, and Russia, supported by France, on the other.

On 28 June, Archduke Franz Ferdinand, heir to the thrones of Austria and Hungary, and his wife Sophie were murdered by a Serb-trained assassin, lighting the fuse to what should have been, but never was, the ‘war to end all wars’. Both sides believed they could win, though Germany feared its military power would be outstripped by France unless it acted. It implemented the Schlieffen Plan, declaring war on France on 3 August and invading Belgium the following day. Britain saw no choice but to side with France.

We look back in horror at what followed, but at the time the war was seen – in Britain at least – as a fight for freedom and a better world. Even so, there was little gung-ho enthusiasm at the prospect of war. Despite the ‘Your Country Needs You’ propaganda, those who could remember the American Civil War of 1861–1865 and the Boer War of 1899–1902 understood that the traditional tactics of trench warfare and cavalry charges were suicidal in the new era of mechanical weaponry.

By a quirk of the Gregorian calendar, 1916 provided an extra day for futile slaughter: 29 February was the ninth day of the Battle of Verdun, a ten-month campaign by the German military specifically to tie down and destroy the French army. Verdun, a town near the border with Belgium and Germany, was strongly fortified and came to represent French national pride; there would be no surrender. In June, the Russians launched an offensive that would divert German military resources, and further north, in July, the British and French began the Battle of the Somme. The German army was eventually forced to withdraw from Verdun to meet these challenges.

The number of casualties at Verdun is uncertain but is estimated at between 650,000 and 750,000, of whom perhaps 300,000 men were killed or missing. No ground was won or lost, though the battle was probably decisive in shoring up French resolve.

In the 1,560 days of the war, nine million soldiers were killed in Europe alone, including one third of all British men who were aged 19–22 when the conflict started. Douaumont, one of the defensive forts at Verdun, is now a memorial and the site of an ossuary (www.verdun-douaumont.com) housing the remains of 130,000 soldiers from the battlefield.

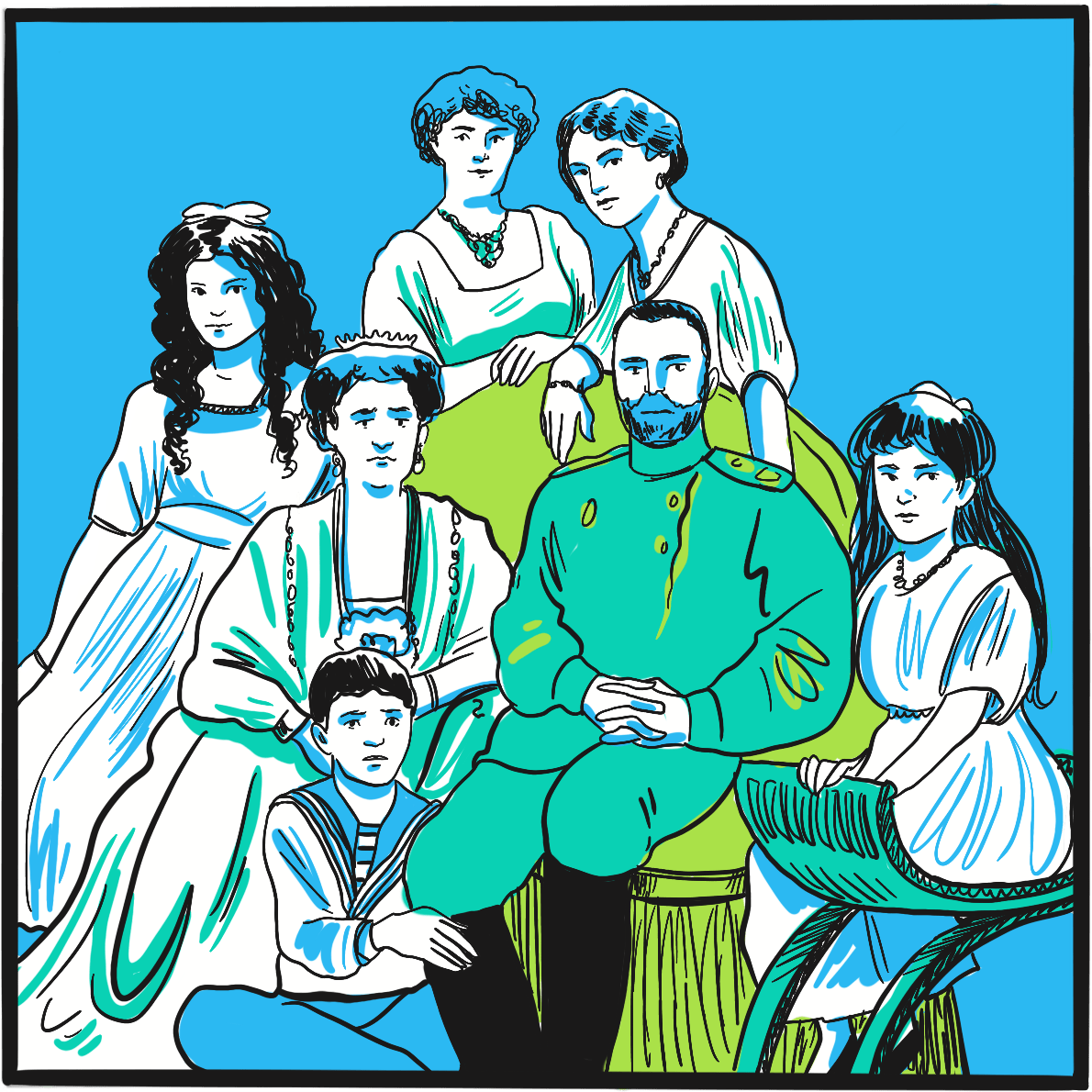

Often described as the most famous person with haemophilia, Alexei Nikolaevich, the Tsarevich of the Russian empire at the beginning of the 20th century, had the misfortune to be born into the royal Romanov family at a time when inherited wealth and privilege were becoming distinctly unpopular.1

Alexei was born in 1904, the son of Tsar Nicholas II and Alexandra Feodorovna, a German aristocrat and a granddaughter of Queen Victoria. He was their fifth child and first son ‒ and, as the late-arriving heir to the crown, bore the considerable weight of the empire’s hope and expectation on his shoulders. Alexandra, as a result of her descent from the British royal family, was a carrier of haemophilia. She had not been a popular choice for Nicholas and, with the risk of haemophilia well known at the time, the marriage was opposed by others in the royal family.

It was apparent shortly after birth that Alexei had haemophilia. This would have been perceived by the population as a judgement from God for some failing of the ruling class, and every attempt was made to keep the fact secret. He grew up cosseted and protected, but many accidents and bleeding episodes are documented, at least one of which resulted in Alexei receiving the last rites. Partly to cope with this, Alexandra removed herself from public life, increasing her unpopularity.

Conventional medicine had little to offer, and it is believed this was one factor behind the family’s attachment to Grigori Rasputin. The peasant healer is reputed to have achieved several miraculous cures when Alexei was ill, including one via a telegram sent from Siberia. Rasputin’s closeness to the family and his growing political influence caused resentment among the aristocracy, resulting in his murder in 1916.

In 1917, as Russia suffered during the First World War, public unrest stoked by food rationing culminated in the February Revolution. The army sided with the protesters and Nicholas was forced to abdicate. The new government wanted him to hand power to Alexei, who they believed would be easily controlled. However, Nicholas knew the boy was not strong enough and abdicated in favour of his less pliable brother, Michael. For his part, Michael knew he could not keep power and soon abdicated himself.

The family were imprisoned in Yekaterinburg, a city about 1,600 miles from St Petersburg, from April 1918 until July, when the advance of the anti-Bolshevik White Army threatened the city. Alarmed by the possibility that the royals might be reinstated, the government ordered their execution. It was a brutal and bloody affair: the family had sewn jewels into their clothing for safe-keeping, which prolonged the carnage by deflecting some of the bullets and bayonets. Even the 13-year-old boy with haemophilia did not die until he was shot in the head.

The bodies were buried before the White Army took the city eight days later. The remains were discovered in 2007 and identified by DNA testing a year later. It was then confirmed that Alexei had haemophilia B, just like the other affected royals.2

It is arguable that haemophilia was instrumental in the demise of the Russian royal family.1 Alexei’s haemophilia contributed to the Romanovs’ close relationship with Rasputin and to Alexandra’s withdrawal from public life; and it influenced Nicholas’s decision not to hand power to his son.

References

- Brown A. The Royal Disease and The Royal Collapse: Political Effects of Hemophilia in the Royal Houses of Europe. 2017. Student research. 63. DePauw University (accessed February 2019).

- Green P. The ‘royal disease’. J Thromb Haemost 2010; 8: 2214–5. doi: 10.1111/j.1538-7836.2010.03999.x.

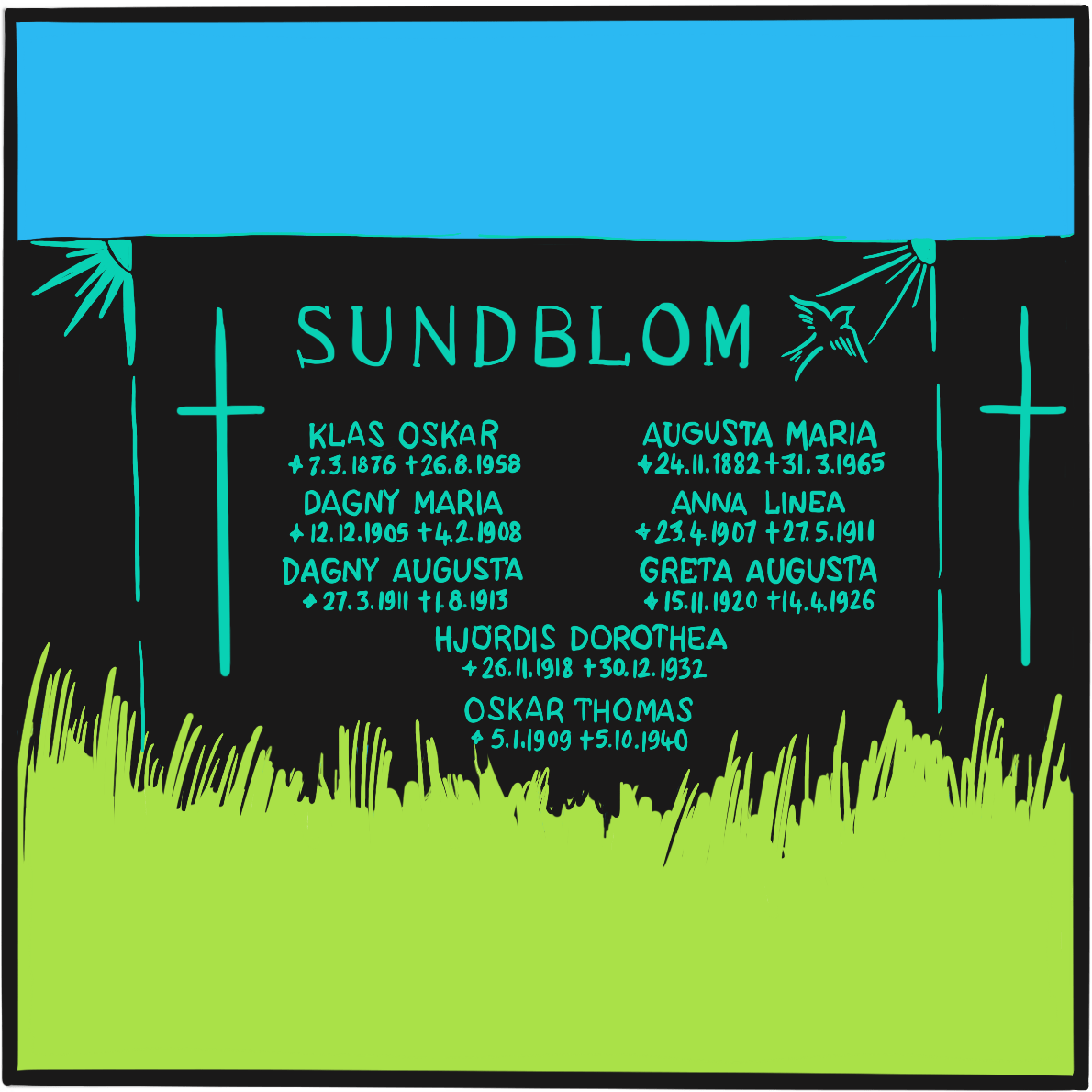

In April 1924, a five-year-old girl called Hjördis attended a clinic at the Deaconess Hospital in Helsinki, Finland. She came from the Åland Islands, situated about halfway between Helsinki and Stockholm, Sweden. She had a history of severe bleeding from the nose, after tooth extraction and from the ankle, and had been hospitalised for ten weeks at age three due to bleeding from a deep wound in her lip.

Hjördis was one of 11 children, of whom seven experienced excessive bleeding and three had died. The physician who saw her at the clinic was the hospital’s physician-in-chief, Dr Erik Adolf von Willebrand, a well-regarded doctor with a long interest in haematology and in blood clotting in particular. He found that Hjördis’s blood count was normal, as was her clotting time (an indicator of thrombin formation); she had slight anaemia and slight thrombocytopenia. But, unusually, her bleeding time (which depends on vasoconstriction and platelet function) was two hours – at least ten times longer than expected. Von Willebrand concluded that her disorder was the result of platelet dysfunction combined with a defect of the vascular wall.

Von Willebrand obtained the co-operation of a local teacher to document the family history, and discovered that the bleeding disorder affected 23 of 66 family members. Unlike haemophilia, the disorder affected both women and men. Bleeding did not involve muscles and joints but mainly affected the mucosae, causing epistaxis, oral bleeding, bruising, heavy menstrual bleeding and excessive bleeding after childbirth. His findings, published in 1926, described a disorder he labelled pseudohaemophilia. Hjördis died from menstrual bleeding, aged 13.

Von Willebrand wasn’t the first to describe a bleeding disorder affecting both women and men – the earliest account seems to have been in 1852, though the cases were labelled haemophilia. However, he provided the best early scientific description, and went on to collaborate with other Scandinavian physicians and publish distinguished research. In recognition of this work, the disorder was named von Willebrand disease between the late 1930s and early 1940s, by which time more cases had been reported.

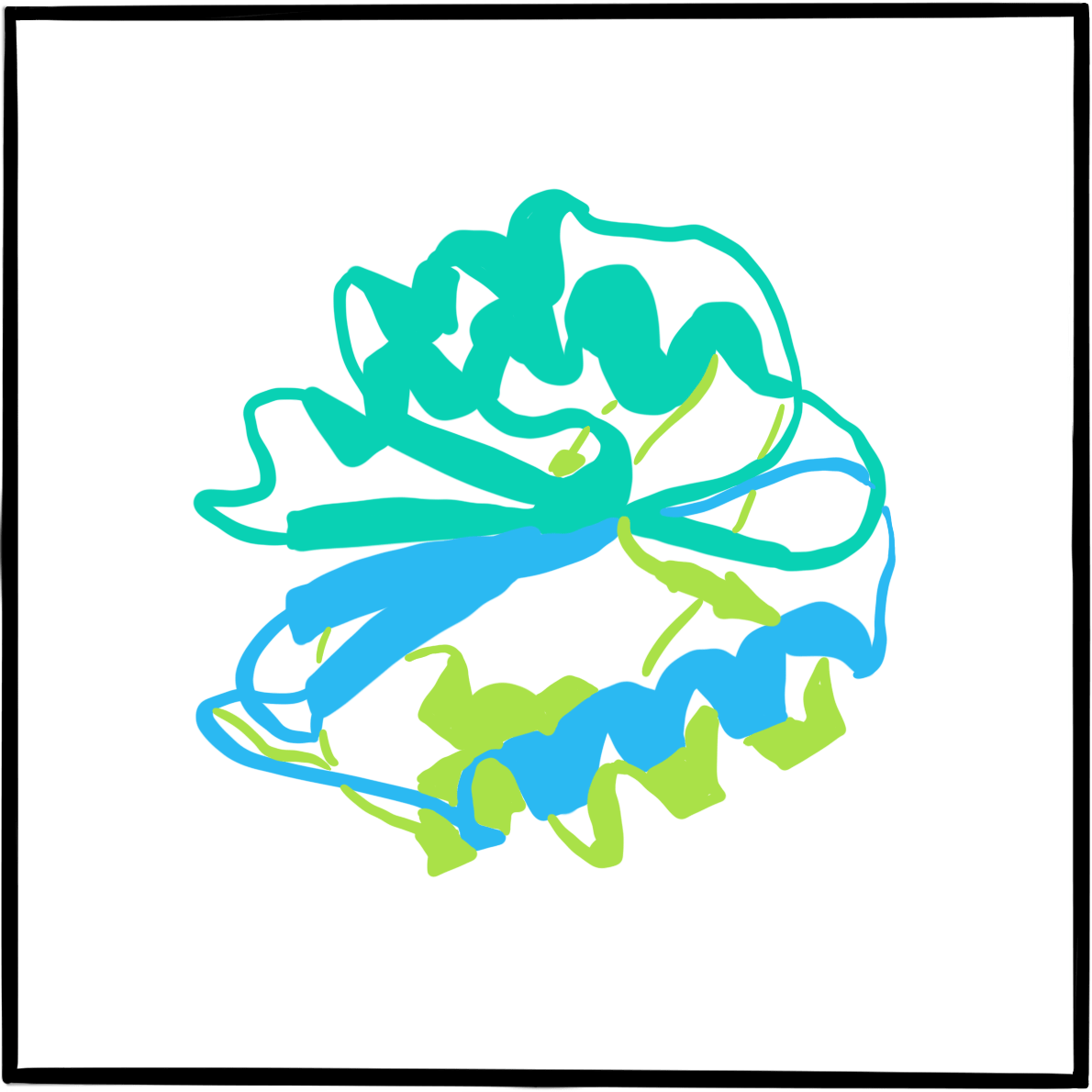

It was not until 1971 that the disorder was attributed to dysfunction of a large protein that acts as a carrier of factor VIII; the protein was christened von Willebrand factor (VWF). Further research in the 1970s and 1980s identified several subtypes of von Willebrand disease, characterised by different molecular defects. The VWF gene was cloned in 1985.

Further reading

Berntorp E. Erik von Willebrand. Thromb Res 2007; 120: S3–S4. doi: 10.1016/j.thromres.2007.03.010.

Federici AB, Berntorp E, Lee CS. The 80th anniversary of von Willebrand’s disease: history, management and research. Haemophilia 2006; 12: 563–72. doi: 10.1111/j.1365-2516.2006.01393.x.

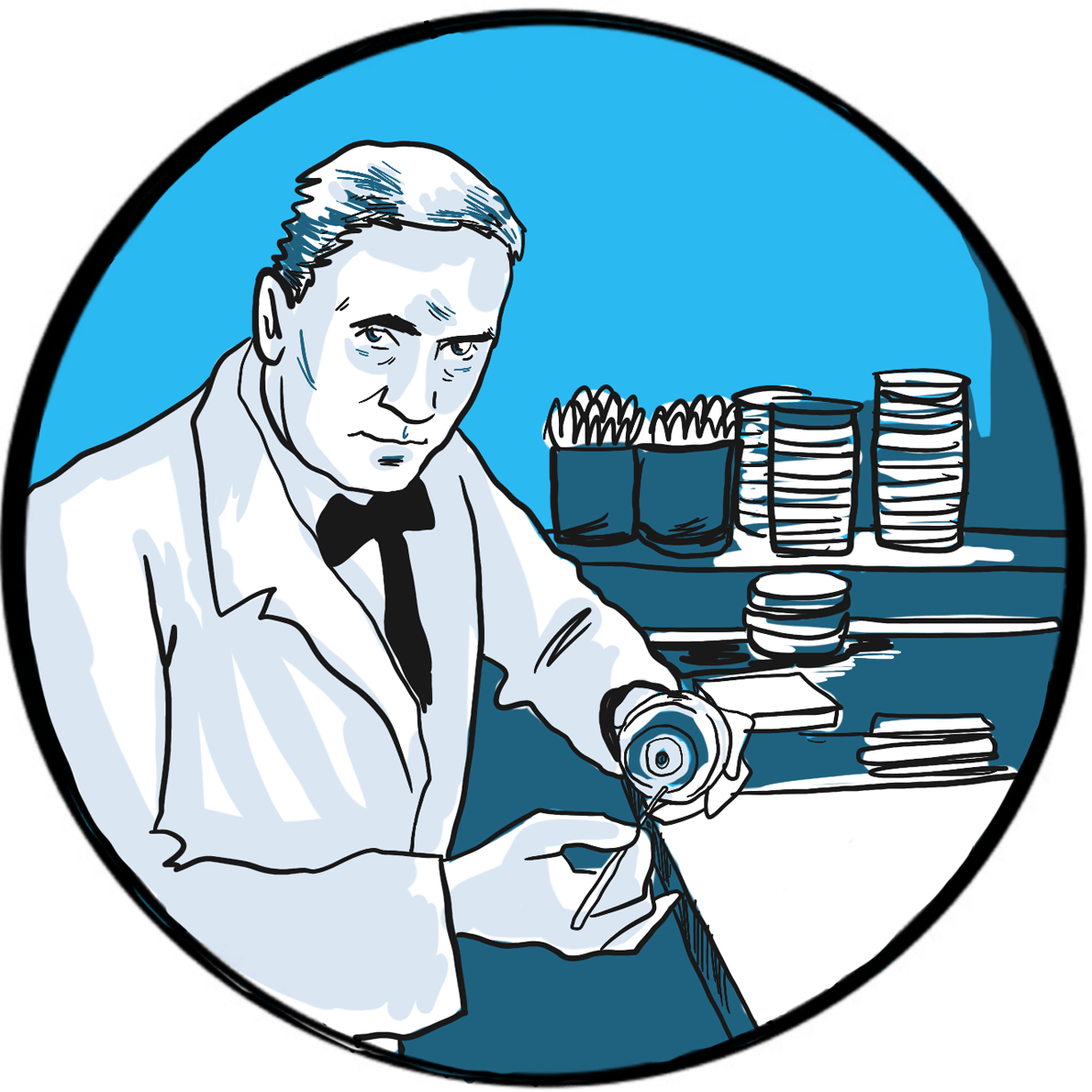

In 1929, Alexander Fleming, a 48-year-old microbiologist working at St Mary’s Hospital, London, published On the antibacterial action of cultures of a penicillium, with special reference to their use in the isolation of b. influenzae.1

Fleming’s paper described his observation a year earlier that bacterial growth in culture plates was inhibited by the presence of a contaminant mould, Penicillium. He is said to have been an untidy worker and to have left the plates exposed to the air when he went on holiday.

Reasoning that the mould was secreting an antibacterial substance into its environment, Fleming tested other moulds for similar activity and found none. He prepared a filtrate from a broth culture of Penicillium and determined its solubility, stability to heat and its activity after dilution. He named the filtrate ‘penicillin’ and found it inactive against some bacteria, including the enterococci and Haemophilus influenzae, but highly active against Gram-positive bacteria including staphylococci and streptococci. He tested its toxicity in mice and the human eye and found it safe, concluding: “It is suggested that it may be an efficient antiseptic for application to, or injection into, areas infected with penicillin-sensitive microbes.”

Fleming was not the first to describe the antibacterial activity of a mould.2 It had long been known that some moulds had antiseptic properties. In the second half of the 19th century, several accounts from Europe and South America reported that Penicillium inhibited bacterial growth. In 1897, French PhD student Ernest Duchesne presented a thesis in which he showed that injecting a Penicillium broth into guinea pigs protected them against infection; his work was ignored. Fleming himself acknowledged the work of the Belgian microbiologist André Gratia, who had published his research on the antibacterial activity of Penicillium in 1924 but had been unable to pursue it due to ill health.

Even after Fleming’s paper, development of the therapeutic applications of penicillin was slow: it was difficult to produce in quantity and Fleming was more interested in exploring its physicochemical properties. Australian-born Howard Florey, chair of pathology at Oxford University, and the German-born biochemist Ernst Chain repeated Duchesne’s work in 1938 and began to produce small quantities of penicillin.3,4 They successfully treated the first patients in 1941.5

It was not possible to scale up production of penicillin in wartime Britain, so Florey and his colleague Norman Heatley travelled to the United States where, with the support of John Fulton, a professor of physiology at Yale University, a large-scale production process was developed. Florey declined to patent the process.

Fleming, Florey and Chain shared the 1945 Nobel Prize in Physiology or Medicine “for the discovery of penicillin and its curative effect in various infectious diseases.”

References

- Fleming A. On the antibacterial action of cultures of a penicillium, with special reference to their use in the isolation of b. influenzae. Br J Exp Pathol 1929; 10: 226–36 (accessed March 2019).

- Arseculeratne SN, Arseculeratne G. A re-appraisal of the conventional history of antibiosis and penicillin. Mycoses 2017; 60: 343–7. doi: 10.1111/myc.12599.

- Kyle RA, Steensma DP, Shampo MA. Howard Walter Florey ‒ production of penicillin. Mayo Clin Proc 2015; 90: e63–e64. doi: 10.1016/j.mayocp.2014.12.028.

- Chain E, Florey HW, Gardner AD, et al. Penicillin as a chemotherapeutic agent. Lancet 1940; 236: 226‒8. doi: 10.1016/S0140-6736(01)08728-1.

- Abraham EP, Chain E, Fletcher CM, et al. Further observations on penicillin. Lancet 1941; 238: 177‒89.

The major economies of the world enjoyed a short period of prosperity and sociopolitical enlightenment in the years immediately following the end of hostilities in 1918, but it was a future built on shaky foundations. The Allies had imposed the Treaty of Versailles on an unwilling Germany, many of whose people were not reconciled to their defeat in the war of 1914–1918. Britain and France were struggling to meet the costs of their victory, but the reparations provided for in Versailles were not adequate and, worse, fuelled further resentment in Germany. The United States had bankrolled Europe’s war effort and its defensive trade policy made it difficult for the old order to repay its debts.

American avarice and regulatory indifference brought down the global economy with the Wall Street Crash in 1929. European countries lacked the resilience to weather the storm, none more vulnerable than Germany, which relied on the US loans now being recalled. The result was bank failures, unemployment and mass hunger. Over the next four years, successive German administrations tried and failed to restore the economy, ultimately resorting to government by decree. Faith in democracy waned and the electorate turned to extremist parties – communist and fascist – for solutions. The fascists prevailed.